Avoid unnecessary work.

Or think about data properties in addition to values.

Distances are frequently used in life and programming challenges. To make the discussion concrete and illustrate the point of the article, let’s say we’d like to enjoy these beautiful warm days in the closest park. We’ll also use Euclidean distance

since fortunately we have a dragon at our disposal that will happily fly us to the destination.

This problem can be trivially solved using a linear pass over all points and keeping track of the smallest distance:

This solution is correct, but is it efficient? Well, we cannot do any better than O(N) in terms of time complexity, since we most certainly want to consider all available parks.

In the best case scenario, we may already be in one of the parks, in which case the distance would be 0 and function can return early having to perform only a single computation. Unfortunately, this does not affect worst-time complexity that remains O(N).

While asymptotic complexity is extremely useful to compare different approaches and for large enough inputs has the largest impact on performance, in practice constant factors that are part of the computation matter as well. In our case, such computation is distance, which has O(1) asymptotic complexity. So theoreticians can declare victory and move on, but we, being performance enthusiasts, we’ll keep digging and ask a fair question - do we really care about the distance to each park? As a matter of fact, we don’t - all we need is to have a way to compare different park candidates and any function f satisfying a property for all p1, p2 in parks d(o, p1) < d(o, p2) -> f(o, p1) < f(o, p2) works for our purposes.

Since we live in a world where negative distances are fairly rare, it’s safe to assume that if d = sqrt(v) and d1 < d2 then d1*d1 < d2*d2. This property allows us to use

As a function for comparing distances to different parks instead of more familiar Euclidean distance

This replacement has a number of benefits, most important of which are:

no need to perform an expensive square root computation

no loss of precision

integer instructions instead of more expensive floating point

Having theory on our side, it’s time to put it to the test and measure the computation time difference between distance and distance_squared functions

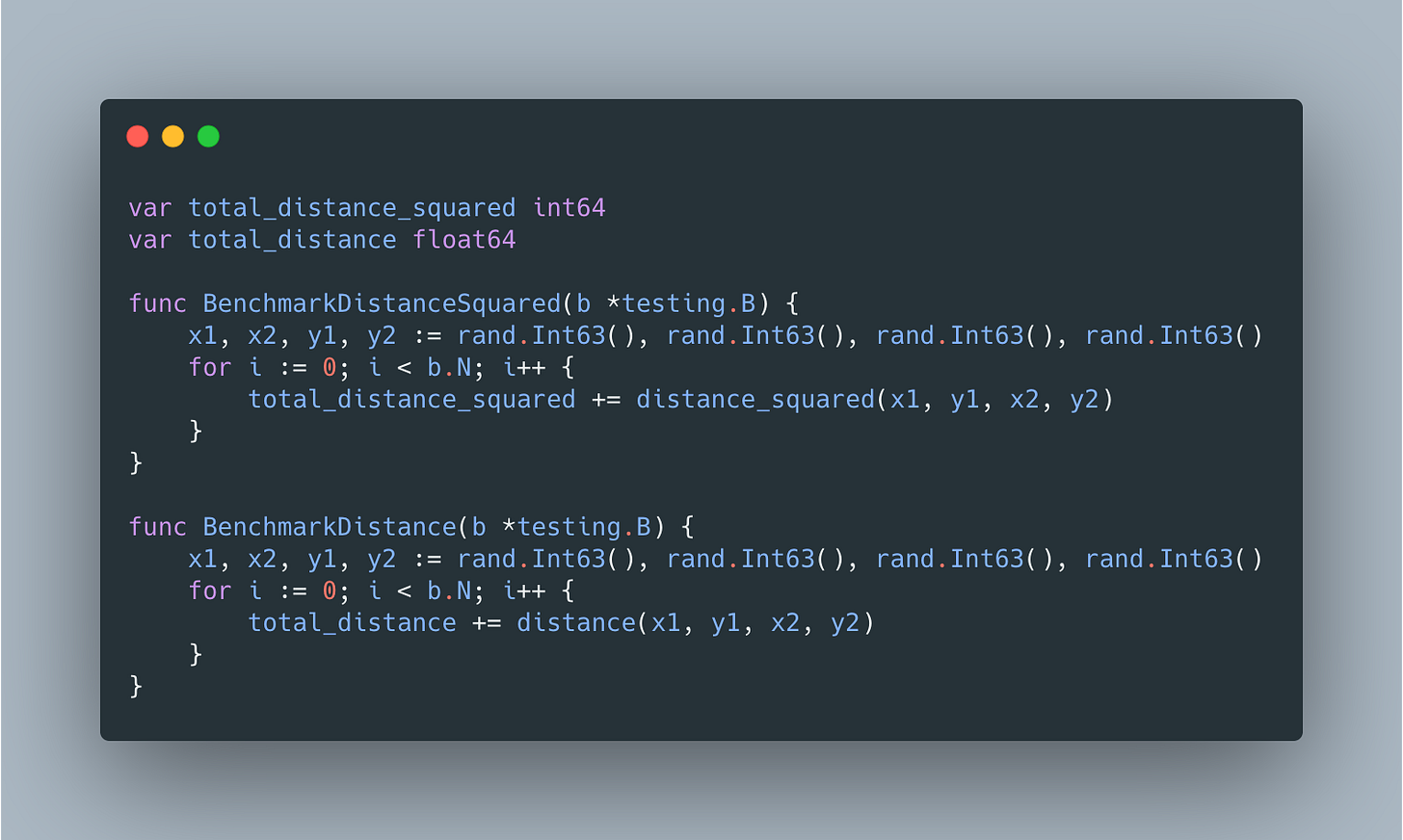

Following straightforward benchmarks will be used to get the data

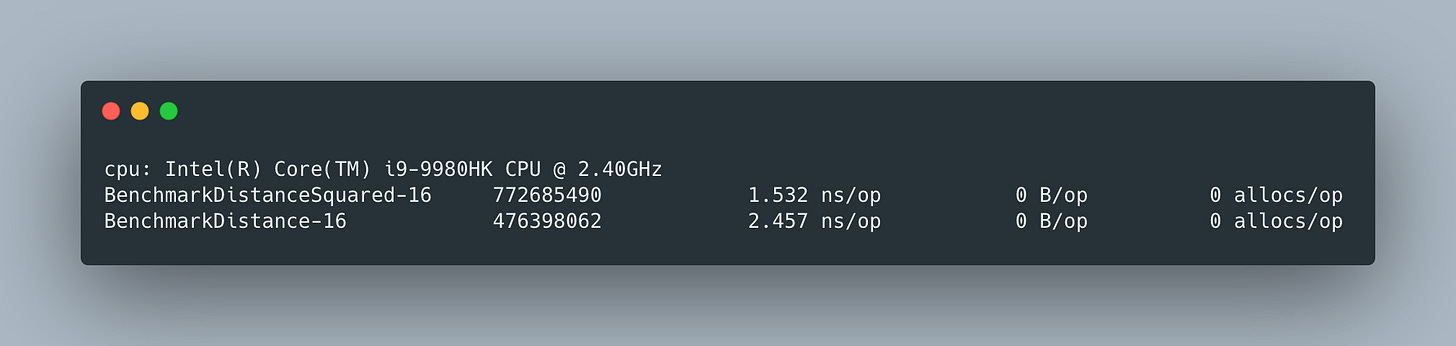

And as expected, the distance_squared is ~40% faster

and most of the difference can be attributed to 2 extra instructions responsible for int64 to float64 conversion and square root computation

You can check explore full assembly and optimizations using Go’s playground.

![Squared Euclidean distance - Hands-On Image Processing with Python [Book] Squared Euclidean distance - Hands-On Image Processing with Python [Book]](https://substackcdn.com/image/fetch/$s_!D5ea!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fbucketeer-e05bbc84-baa3-437e-9518-adb32be77984.s3.amazonaws.com%2Fpublic%2Fimages%2Fb3baeb2d-12ec-422b-9b5f-7d52643596c9_1910x580.png)