Constructing priority heap efficiently.

Or why it's ok to ask about time complexity during coding interviews.

A good chunk of coding interview questions involve using priority heaps in one way or another. For example, search engines and LLM chats use word embeddings to find K closest vectors in the database. The most straightforward and not necessarily efficient way to do this precisely is to create a min-heap with distances from the query to stored vectors and pop K out of it. Another frequent use-case is finding the k-th order statistic, where priority queue is an easy way to get O(klogn) time complexity without having to hope for O(n) average time of quick-select.

Interestingly, almost always when I ask time complexity of algorithms that use priority queues, candidates insist on O(nlogn) as a time complexity for creating a heap, which is wrong! Apparently this mistake is so frequent that even Google’s Bard makes it:

The time complexity of creating a priority heap is O(n log n), where n is the number of elements in the array. This is because the heapifying process takes O(log n) time for each element in the array, and there are n elements in the array.

Fortunately C++ doesn’t repeat this mistake and std::make_heap has a following guarantee:

Complexity

At most 3 * std::distance(first, last) comparisons.

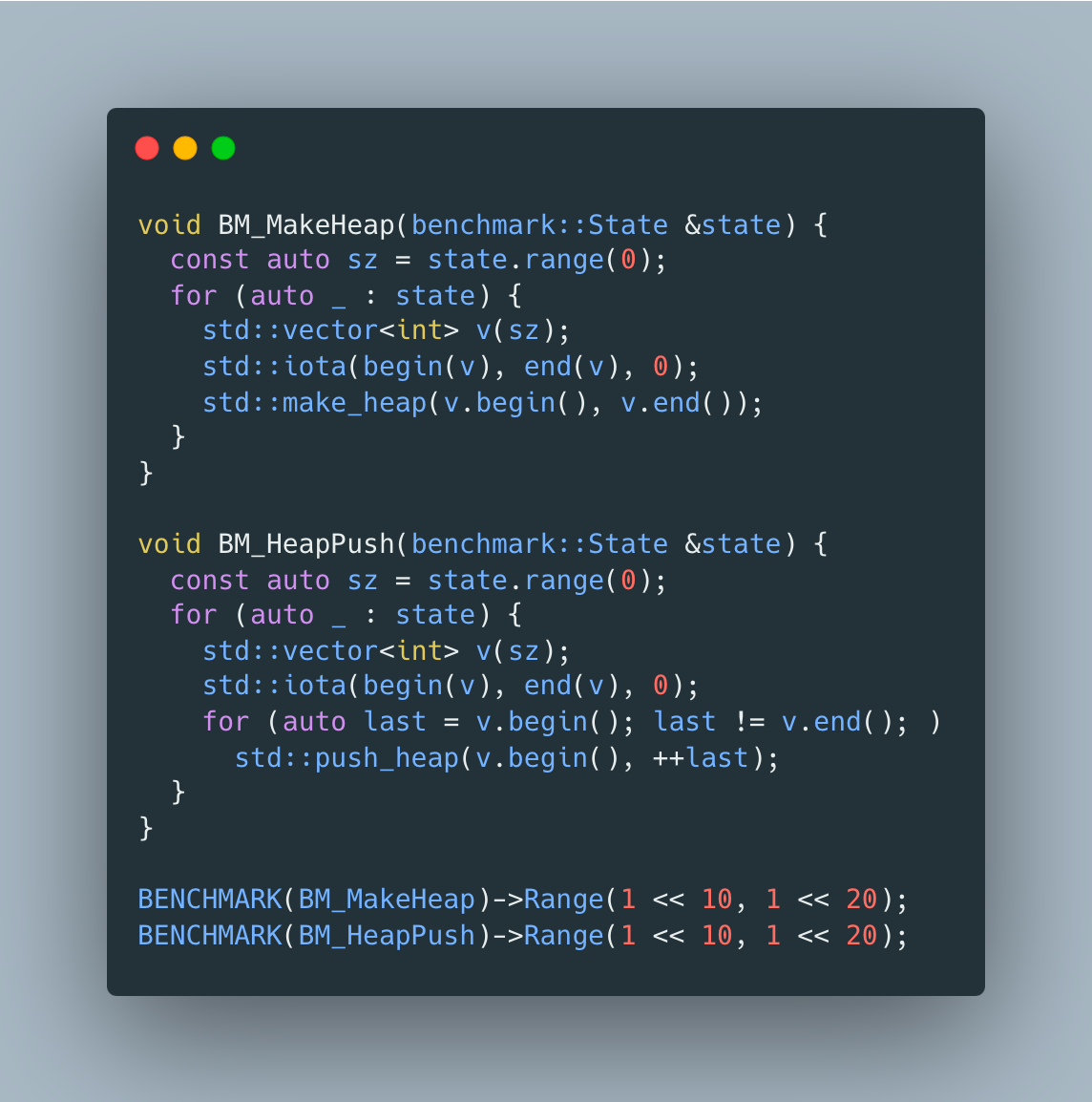

And before you question whether it matters in practice, let’s compare how much time it takes to create a priority heap using std::push_heap vs a std::make_heap

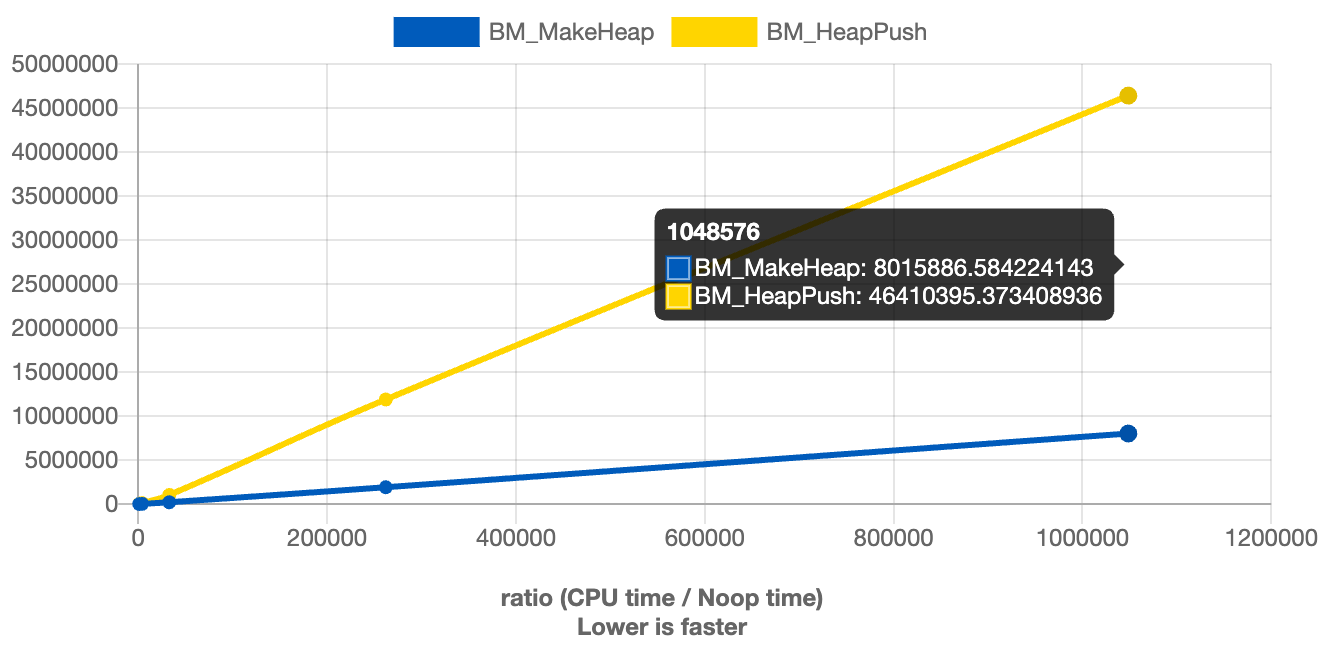

And a picture is worth a thousand words

So next time when designing an algorithm or a data structure, consider adding the API for bulk operations - even if there is no way to improve complexity, oftentimes it gives a chance to reserve enough memory for internal data structures to avoid frequent reallocations.

nice!