Faster math in Rust?

Or compiler intrinsics FTW!

Compiler intrinsics are functions that are provided by the compiler to give programmers direct access to low-level machine instructions. This can be useful for performance-critical code, as it allows the programmer to write code that is specifically optimized for the target processor.

Intrinsics are typically expanded inline: the compiler replaces the intrinsic call with the corresponding machine instructions at compile time. This lets the compiler optimize the call for the target processor, and it also eliminates the overhead of a function call and return.
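You don't need nightly to get a feel for this. As a minimal sketch on stable Rust, the two wrappers below (the names `popcount` and `fused_multiply_add` are made up for illustration) call standard library methods that, in current versions of the standard library, are thin layers over compiler intrinsics. Which instruction they end up as depends on the target and the enabled CPU features.

```rust
/// Counts the set bits in `x`. `u32::count_ones` is backed by a compiler
/// intrinsic and can lower to a single population-count instruction on
/// targets that have one.
fn popcount(x: u32) -> u32 {
    x.count_ones()
}

/// Computes `a * b + c` with a single rounding step. `f32::mul_add` is
/// backed by a fused multiply-add intrinsic.
fn fused_multiply_add(a: f32, b: f32, c: f32) -> f32 {
    a.mul_add(b, c)
}

fn main() {
    println!("popcount(0b1011) = {}", popcount(0b1011));
    println!("2 * 3 + 1 = {}", fused_multiply_add(2.0, 3.0, 1.0));
}
```

Neither call site looks any different from an ordinary method call, which is the point: the compiler, not the library, decides what instructions come out.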

For these reasons and many more, I love casually browsing intrinsics documentation for different languages. The last time I was reading about Rust intrinsics, I noticed 4 functions with the suffix _fast that seem particularly fitting for our quest to improve performance. Let’s take a look at fadd_fast, for example. Its documentation is somewhat sparse:

Float addition that allows optimizations based on algebraic rules. May assume inputs are finite.

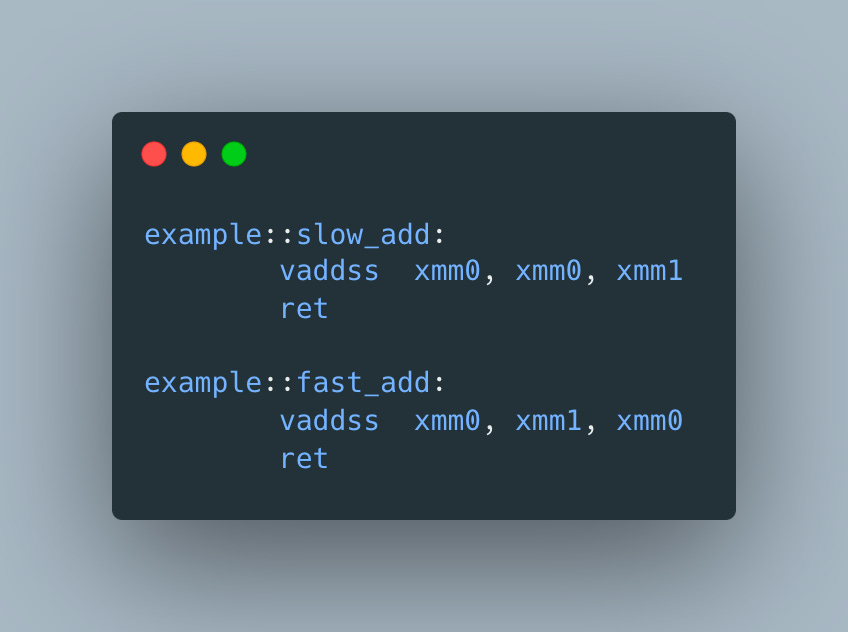

so to better understand how we can use it, let’s do a little investigation. We’ll start with

and look at the generated assembly

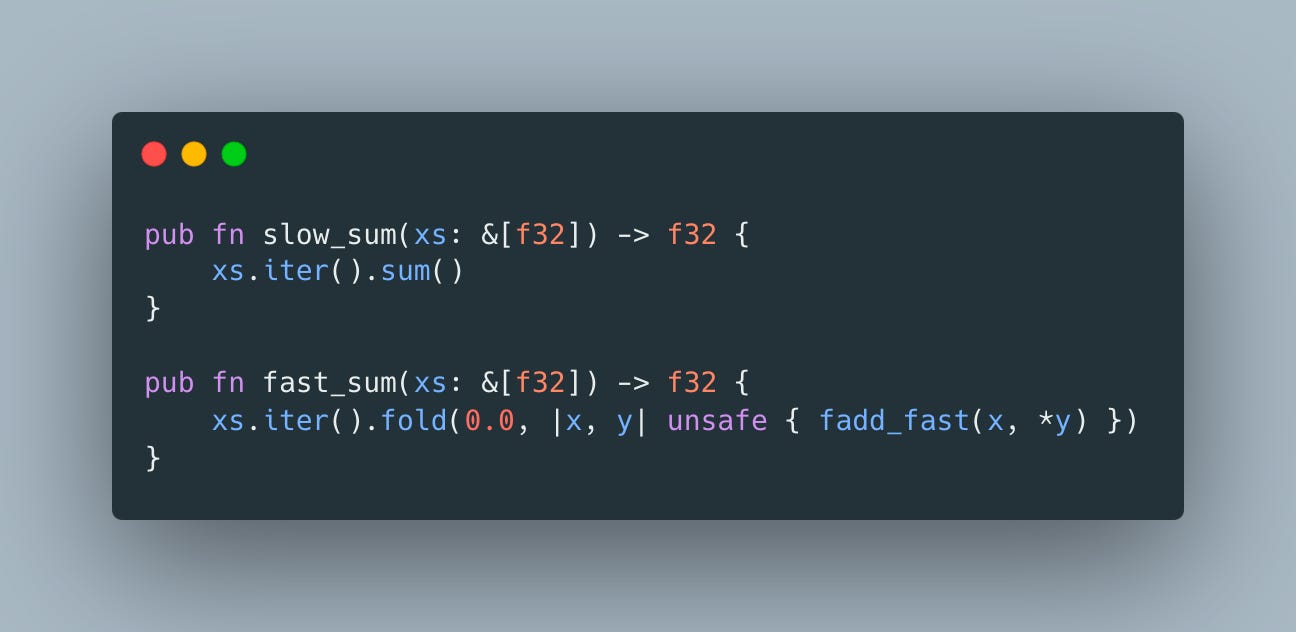

Apart from the register order, there doesn’t seem to be any difference, so it doesn’t look like it’s going to deliver on its “fast” promise. But wait: what if by “algebraic rules” the documentation means that the compiler may treat f32 addition as associative, which is not the case by default? To test this hypothesis, let’s see what happens when many f32s are added together
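A quick way to convince ourselves that f32 addition is not associative by default is to add the same three values in two different groupings. This is a minimal sketch on stable Rust; the function names and the values are picked purely for illustration.

```rust
/// Adds left to right: (a + b) + c.
fn left_to_right(a: f32, b: f32, c: f32) -> f32 {
    (a + b) + c
}

/// Adds right to left: a + (b + c).
fn right_to_left(a: f32, b: f32, c: f32) -> f32 {
    a + (b + c)
}

fn main() {
    // Near 1e8 an f32 can only represent multiples of 8,
    // so adding 1.0 to -1e8 rounds straight back to -1e8.
    let (a, b, c) = (1.0e8_f32, -1.0e8_f32, 1.0_f32);
    println!("(a + b) + c = {}", left_to_right(a, b, c));
    println!("a + (b + c) = {}", right_to_left(a, b, c));
}
```

The first grouping gives 1 and the second gives 0. A compiler that has to preserve these results cannot freely reorder a long chain of additions, and reordering is exactly what vectorizing a sum requires.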

Now we’re talking!

Seeing ymm* registers (256-bit AVX) instead of xmm* (128-bit SSE) looks very promising, but how much difference does it make in practice?

```rust
#![feature(core_intrinsics)]

use std::intrinsics::fadd_fast;

use criterion::{criterion_group, criterion_main, Criterion};

const XS: &'static [f32] = &[3.14; 1000];

fn slow_sum(xs: &[f32]) -> f32 {
    xs.iter().sum()
}

fn fast_sum(xs: &[f32]) -> f32 {
    xs.iter().fold(0.0, |x, y| unsafe { fadd_fast(x, *y) })
}

fn bench_sums(c: &mut Criterion) {
    let mut group = c.benchmark_group("f32 sum");
    group.bench_function("slow sum", |b| b.iter(|| slow_sum(XS)));
    group.bench_function("fast sum", |b| b.iter(|| fast_sum(XS)));
    group.finish();
}

criterion_group!(benches, bench_sums);
criterion_main!(benches);
```
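If you are stuck on stable Rust, the kind of reordering that associativity permits can be approximated by hand. The sketch below is only an illustration of the idea, not what the compiler actually emits: `chunked_sum` and the lane count of 8 are hypothetical choices, and whether it gets vectorized, or is any faster, depends on the target and optimization settings.

```rust
/// Strict left-to-right sum, same as `slow_sum` in the benchmark.
fn sequential_sum(xs: &[f32]) -> f32 {
    xs.iter().sum()
}

/// Sums with 8 independent accumulators, then combines them.
/// This changes the order of the additions, so the result may differ
/// slightly from `sequential_sum` for inputs that are not exactly summable.
fn chunked_sum(xs: &[f32]) -> f32 {
    const LANES: usize = 8; // assumption: eight f32 lanes, as in one 256-bit register
    let mut acc = [0.0_f32; LANES];

    let chunks = xs.chunks_exact(LANES);
    let tail = chunks.remainder(); // leftover elements that don't fill a chunk

    for chunk in chunks {
        // Each accumulator only ever sees every eighth element.
        for (a, x) in acc.iter_mut().zip(chunk) {
            *a += *x;
        }
    }

    let lanes_total: f32 = acc.iter().sum();
    let tail_total: f32 = tail.iter().sum();
    lanes_total + tail_total
}

fn main() {
    let xs = [3.14_f32; 1000];
    println!("sequential: {}", sequential_sum(&xs));
    println!("chunked:    {}", chunked_sum(&xs));
}
```

The two printed values need not match to the last digit, which is the trade-off in a nutshell: the reordered sum is a different, equally valid approximation of the true sum.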

Even though my M1 MacBook Air is not a perfect benchmark machine, the results don’t leave much room for interpretation.

We can get similar results in C/C++ by adding the -ffast-math compiler flag, but it is overly coarse-grained and moves a very important decision from the source code to the build configuration.

So if you’re using Rust nightly, don’t mind unstable features, accept that floating-point arithmetic is not exact, and like performance, consider using one of the *_fast intrinsics. Just keep in mind that they are unsafe: as the documentation says, they may assume inputs are finite.