Faster reservoir sampling.

Or taking advantage of better algorithms.

Reservoir sampling is a fundamental technique in computer science used for selecting a random sample of items from a large dataset, especially when the size of the dataset is unknown or too large to fit into memory at once. It plays a crucial role in various applications, including data analysis, machine learning, and algorithm design.

The importance of reservoir sampling lies in its ability to provide a truly random and unbiased sample, regardless of the dataset's size. This characteristic is particularly valuable when dealing with data streams, massive databases, or situations where you need to maintain the statistical integrity of your sample. Reservoir sampling ensures that every item in the dataset has an equal chance of being included in the sample, making it an indispensable tool for designing efficient and accurate algorithms in computer science and data analysis. Whether you're working with big data or designing randomized algorithms, reservoir sampling is a key technique to ensure the robustness and reliability of your results.

In fact it’s so important that it used to be a popular coding interview question at largest tech companies. Fortunately its most popular implementation is fairly trivial

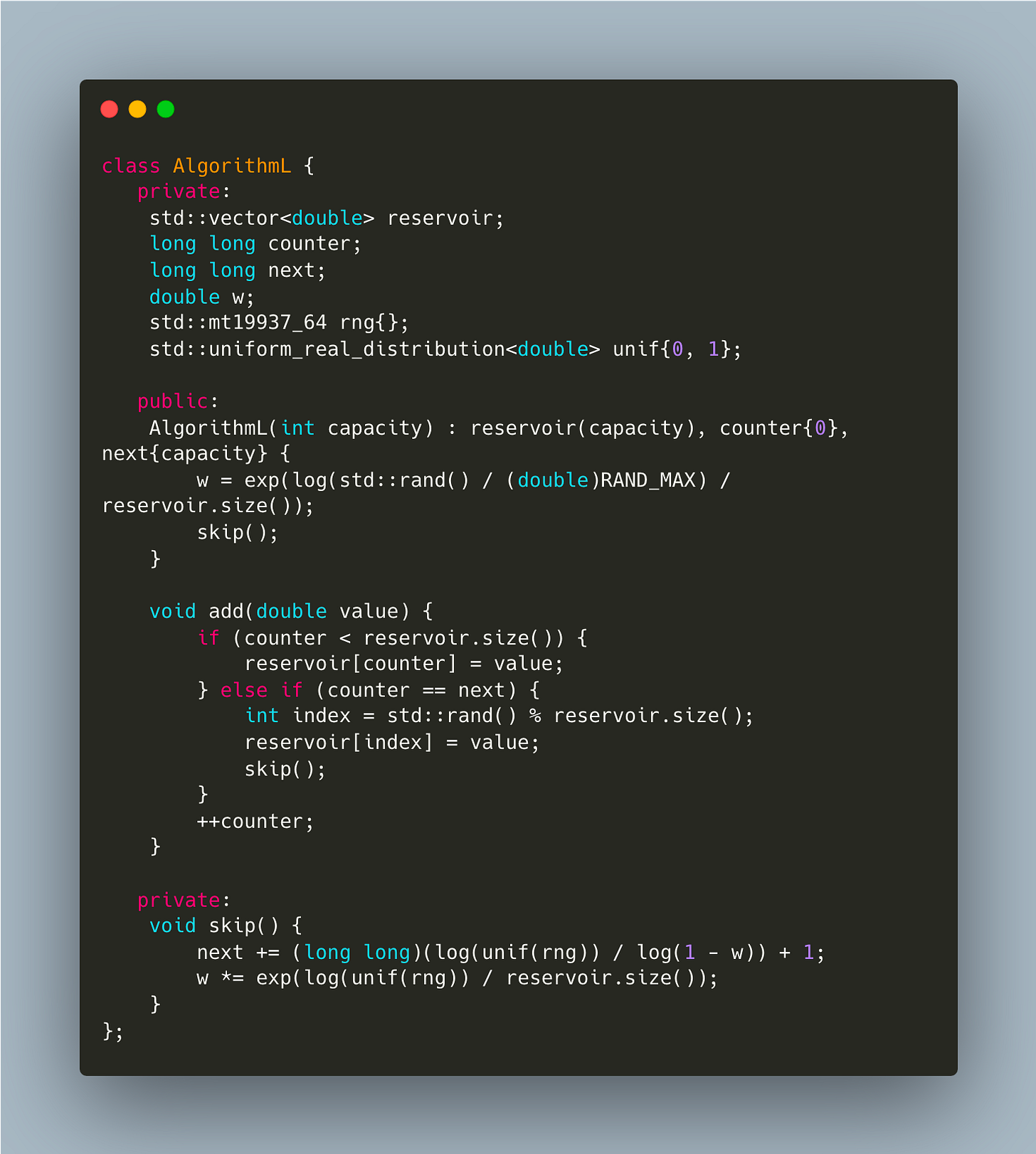

It’s easy to notice that random number has to be generated for every element from the stream after reservoir is filled. This means that if n » k random generator calls are likely to impact overall performance. What if instead of throwing a dice for every element from the stream to see if it should end up in the reservoir, we could somehow come up with a strategy of skipping certain number of source elements before accepting one into the reservoir. Turns out there is a way. Theory behind such algorithms is tricky but well covered in other articles, so here I’ll only consider AlgorithmL because of its simplicity

It’s certainly more involved but not much more code. Is this extra complexity justified though? Let’s see

| #include <algorithm> | |

| #include <cmath> | |

| #include <iostream> | |

| #include <iterator> | |

| #include <random> | |

| #include <vector> | |

| class AlgorithmL { | |

| private: | |

| std::vector<double> reservoir; | |

| long long counter; | |

| long long next; | |

| double w; | |

| std::mt19937_64 rng{}; | |

| std::uniform_real_distribution<double> unif{0, 1}; | |

| public: | |

| AlgorithmL(int capacity) : reservoir(capacity), counter{0}, next{capacity} { | |

| w = exp(log(std::rand() / (double)RAND_MAX) / reservoir.size()); | |

| skip(); | |

| } | |

| void add(double value) { | |

| if (counter < reservoir.size()) { | |

| reservoir[counter] = value; | |

| } else if (counter == next) { | |

| int index = std::rand() % reservoir.size(); | |

| reservoir[index] = value; | |

| skip(); | |

| } | |

| ++counter; | |

| } | |

| auto sample() { return reservoir; } | |

| private: | |

| void skip() { | |

| next += (long long)(log(unif(rng)) / log(1 - w)) + 1; | |

| w *= exp(log(unif(rng)) / reservoir.size()); | |

| } | |

| }; | |

| class AlgorithmR { | |

| private: | |

| std::vector<double> reservoir; | |

| long long counter; | |

| public: | |

| AlgorithmR(int capacity) : reservoir(capacity), counter{0} {} | |

| void add(double value) { | |

| if (counter < reservoir.size()) { | |

| reservoir[counter] = value; | |

| } else { | |

| long long replacementIndex = std::rand() % counter; | |

| if (replacementIndex < reservoir.size()) { | |

| reservoir[replacementIndex] = value; | |

| } | |

| } | |

| ++counter; | |

| } | |

| auto sample() { return reservoir; } | |

| }; | |

| static void BH_algoR(benchmark::State& state) { | |

| auto k = static_cast<int>(state.range(0)); | |

| AlgorithmR algoR{k}; | |

| double d = 0.0; | |

| for (auto _ : state) { | |

| algoR.add(d++); | |

| } | |

| } | |

| BENCHMARK(BH_algoR)->Arg(5)->Arg(20)->Arg(200)->Arg(1000)->Arg(10000); | |

| static void BH_algoL(benchmark::State& state) { | |

| auto k = static_cast<int>(state.range(0)); | |

| AlgorithmL algoL{k}; | |

| double d = 0.0; | |

| for (auto _ : state) { | |

| algoL.add(d++); | |

| } | |

| } | |

| BENCHMARK(BH_algoL)->Arg(5)->Arg(20)->Arg(200)->Arg(1000)->Arg(10000); |

and the results look very promising with ~20X speedup.

Better algorithms can bring impressive wins, so it never hurts to follow the latest research papers.