Most dynamic programming languages come with convenient abstractions that make our code shorter and easier to understand, but, unlike C++ and Rust which promise zero-cost abstractions, also come with additional performance overhead. Does this have to be the case though? We are used to nice things being expensive, but let’s see if it holds in Python.

To make things more concrete, let’s take a very simple problem of summing up first n numbers. The first solution that comes to mind is to use a for loop

In fact I’ve used 2 versions, since I wanted to make sure that n+1 passed to a range does not affect performance.

We can do the same using a while loop

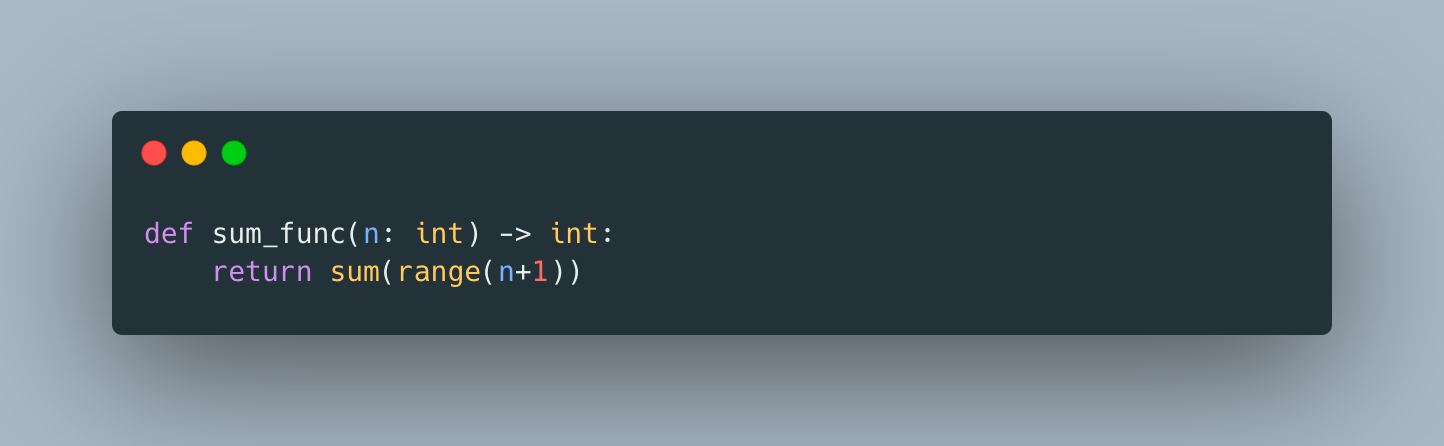

We can also use a built-in sum function

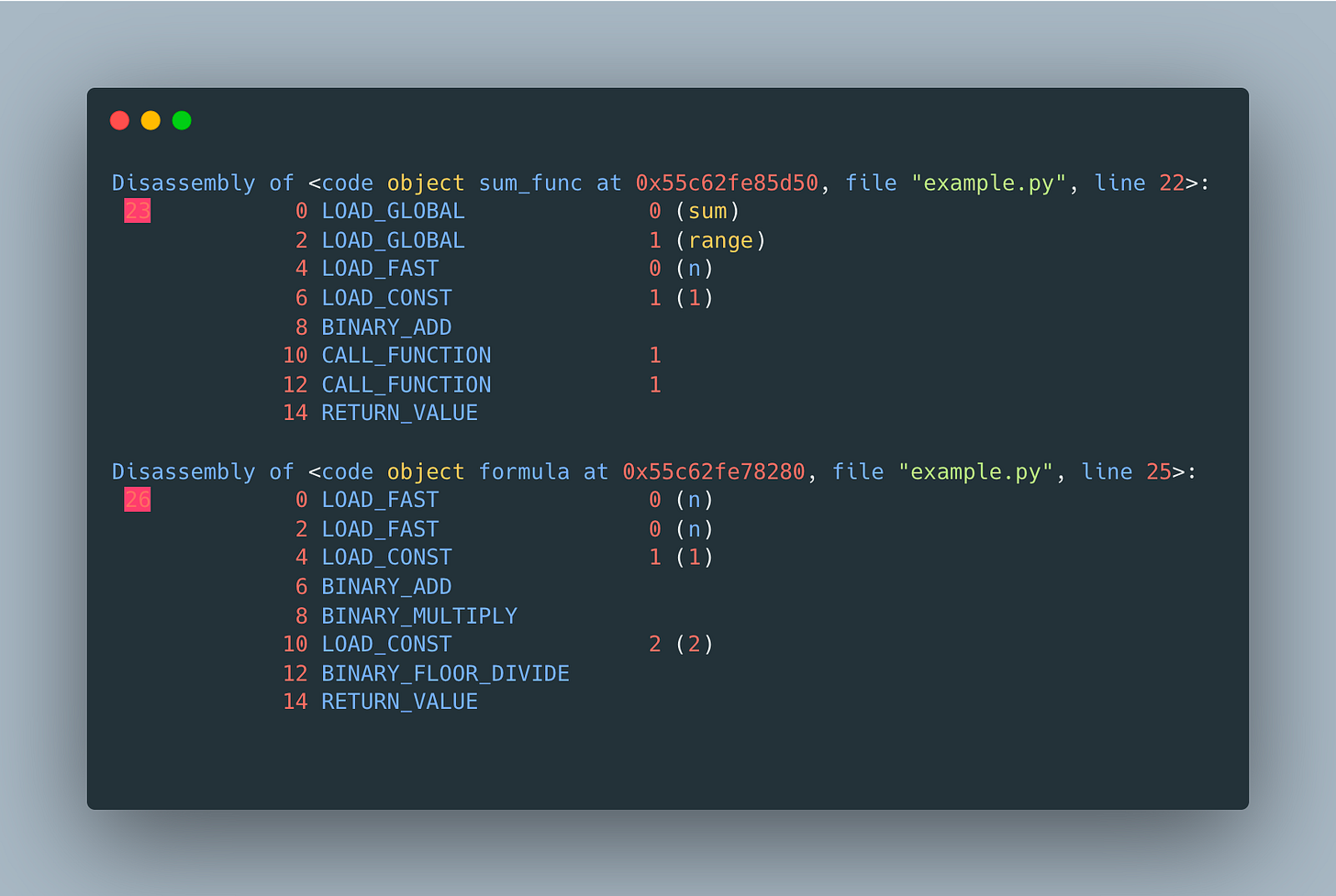

And, for completeness' sake, we can use mathematics

Generated bytecode instruction count seems to be proportional to the number of Python lines, with sum and formula based being the shortest

followed by for loop based solutions

and while loop based solution being the longest one

Now it’s time to get the numbers

As expected, formula based solution turned out to be the fastest one - after all it does not involve any iteration. But the fact that while loop based solution is ~2X slower is not something I’d expect, despite its bytecode instruction count being longer.

The reason for this performance difference is even easier to understand after observing that using built-in sum function is almost 3X faster than the for loop based solution. The secret is in the fact that abstractions like range iterator and sum function are implemented in C, whereas bytecode instructions for the while loop based solution are implemented in Python, which is significantly slower.

So what’s the moral of a story?

Use mathematics to avoid iteration (cheat)

Leverage built-in functions whenever possible

Using high-level abstractions that can capture the intent may often be backed by C, delivering both ergonomics and outstanding performance