Fusing math.Sqrt calls.

Or simple mathematical properties for better perf and precision.

As promised, in this article we continue improving the Weaviate database. Vector similarity search is a technique for finding the vectors most similar to a given query vector in a vector database. Vectors are typically used to represent data such as text, images, and audio. The similarity of two vectors is typically measured with a distance or similarity metric, such as Euclidean distance, Manhattan distance, or cosine similarity. Today we’re going to look at the cosine similarity implementation.

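Since it directly implements the cosine similarity formula, the dot product of the two vectors divided by the product of their Euclidean norms, it may seem like there isn’t much for us to do. But let’s refresh some of the sweet memories from school. In particular, the product of two square roots equals the square root of the product: √x · √y = √(x · y) for non-negative x and y.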

Let’s apply this simple rule to the cosine similarity implementation above.
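Written out, with $a$ and $b$ standing for the two vectors (the symbols are my choice for illustration), the rule turns the two square roots in the denominator into one. Both sums of squares are non-negative, so the identity applies:

$$
\cos(a, b)
= \frac{\sum_i a_i b_i}{\sqrt{\sum_i a_i^2}\,\sqrt{\sum_i b_i^2}}
= \frac{\sum_i a_i b_i}{\sqrt{\left(\sum_i a_i^2\right)\left(\sum_i b_i^2\right)}}
$$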

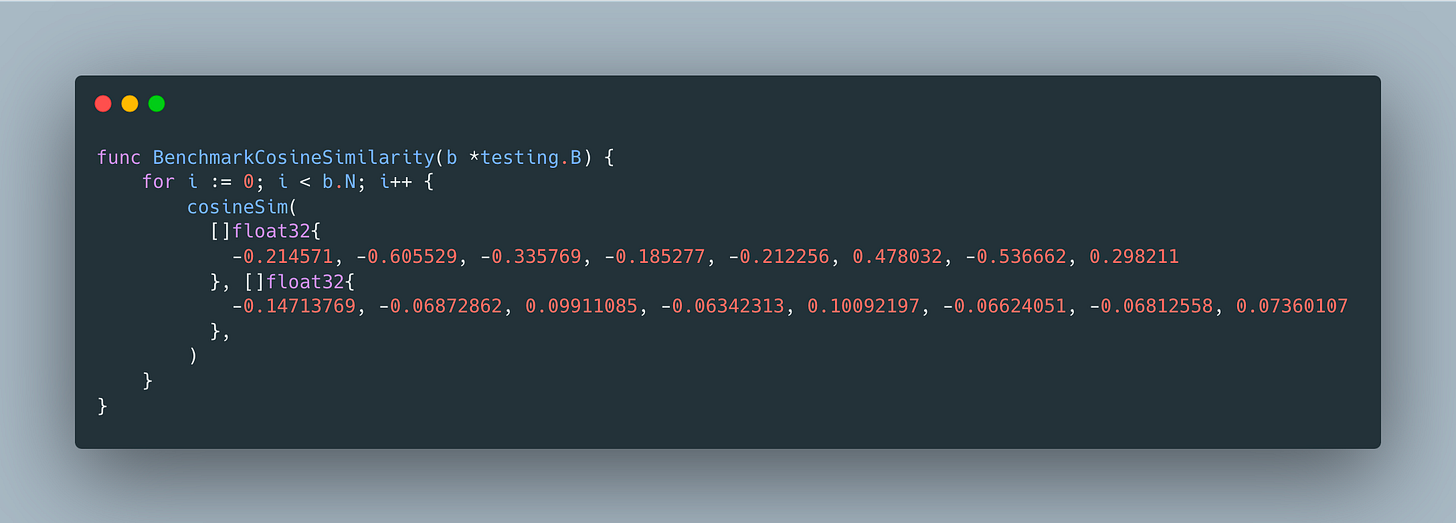

We’ll use the following benchmark to see if it makes a difference.

And sure enough, since the cost is dominated by processing the vectors, this optimization gives only a marginal improvement (roughly 1%), from

BenchmarkCosineSimilarity-8 78623644 15.27 ns/op 0 B/op 0 allocs/op

to

BenchmarkCosineSimilarity-8 67631196 15.13 ns/op 0 B/op 0 allocs/op

But wait, there is more: every operation on floating point numbers can add rounding error, math.Sqrt included, so dropping one of them also tends to improve precision. The only gotcha is that it technically reduces the range of numbers we support, since x * y may overflow float64 even when √x · √y would not, but given that the max float64 is 1.7976931348623157e+308, I’m willing to take my chances. What about you?