Growing queues faster in go.

Or copy vs %.

Dynamic data structures have a size that can change at runtime. This means that the data structure can grow or shrink as needed to accommodate the data that is being stored. They are often used when the size of the data set is not known in advance, such as in the case of a stack, queue etc.

Today we’re going to look at what happens with the queue in golang when newly enqueued element no longer fits into existing storage slice.

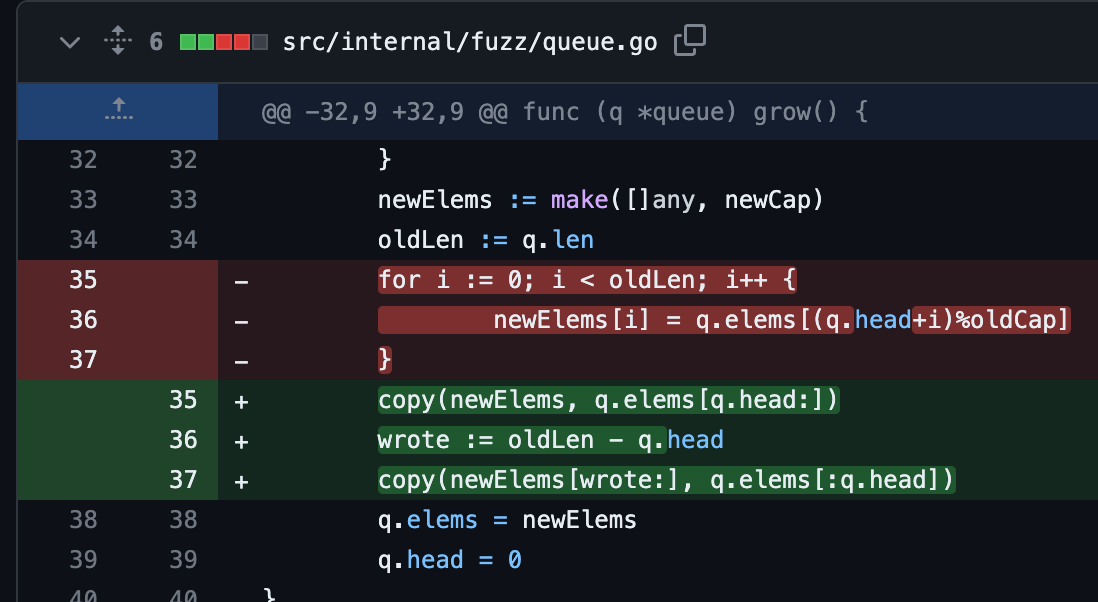

In particular note that since this queue implementation is using ring buffer under the hood, q.head points at the spot where queue “starts”. Because of this, when copying elements into newly allocated storage, % is used to recompute element indices for the new head 0.

It’s not a secret that % is relatively expensive, so I started to wonder about its impact on performance. Technically it’s not needed - we just need to copy the elements in range [q.head..len(q)) followed by [len(q)-q.head..q.head) into newly allocated storage.

In my opinion it’s at least as readable and leverages built-in copy. But what about performance? To find out we’ll use

| package queue | |

| import ( | |

| "reflect" | |

| "testing" | |

| ) | |

| func copyUsingMod(elems []int, head int) []int { | |

| oldLen := len(elems) | |

| newElems := make([]int, oldLen) | |

| for i := 0; i < oldLen; i++ { | |

| newElems[i] = elems[(head+i)%oldLen] | |

| } | |

| return newElems | |

| } | |

| func copyUsingCopy(elems []int, head int) []int { | |

| oldLen := len(elems) | |

| newElems := make([]int, oldLen) | |

| copy(newElems, elems[head:]) | |

| wrote := oldLen - head | |

| copy(newElems[wrote:], elems[:head]) | |

| return newElems | |

| } | |

| func TestModCopy(t *testing.T) { | |

| if !reflect.DeepEqual(copyUsingMod([]int{1}, 0), []int{1}) { | |

| t.Error("1") | |

| } | |

| if !reflect.DeepEqual(copyUsingMod([]int{1, 2}, 0), []int{1, 2}) { | |

| t.Error("2") | |

| } | |

| if !reflect.DeepEqual(copyUsingMod([]int{1, 2}, 1), []int{2, 1}) { | |

| t.Error("3") | |

| } | |

| } | |

| func TestCopyCopy(t *testing.T) { | |

| if !reflect.DeepEqual(copyUsingCopy([]int{1}, 0), []int{1}) { | |

| t.Error("1") | |

| } | |

| if !reflect.DeepEqual(copyUsingCopy([]int{1, 2}, 0), []int{1, 2}) { | |

| t.Error("2") | |

| } | |

| if !reflect.DeepEqual(copyUsingCopy([]int{1, 2}, 1), []int{2, 1}) { | |

| t.Error("3") | |

| } | |

| } | |

| var elems = []int{1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20} | |

| func TestModel(t *testing.T) { | |

| for h := 0; h < len(elems); h++ { | |

| if !reflect.DeepEqual(copyUsingMod(elems, h), copyUsingCopy(elems, h)) { | |

| t.Error("model") | |

| } | |

| } | |

| } | |

| func BenchmarkModClone(b *testing.B) { | |

| for i := 0; i < b.N; i++ { | |

| for h := 0; h < len(elems); h++ { | |

| copyUsingMod(elems, h) | |

| } | |

| } | |

| } | |

| func BenchmarkCopyClone(b *testing.B) { | |

| for i := 0; i < b.N; i++ { | |

| for h := 0; h < len(elems); h++ { | |

| copyUsingCopy(elems, h) | |

| } | |

| } | |

| } |

To focus on the topic of our discussion, it exercises only the copying part. And the results are

Not bad for such a small slice, since total cost is dominated by the memory allocation cost.

This change is part of the golang PR and hopefully will make golang repo a bit better.