Likely branches first.

Or why it pays off to know your odds.

How often do we think about which if branch should go first? And even when we do, do we use some scientific approach, gut feel or a stylistic approach? And finally, does it even matter? Let’s find out.

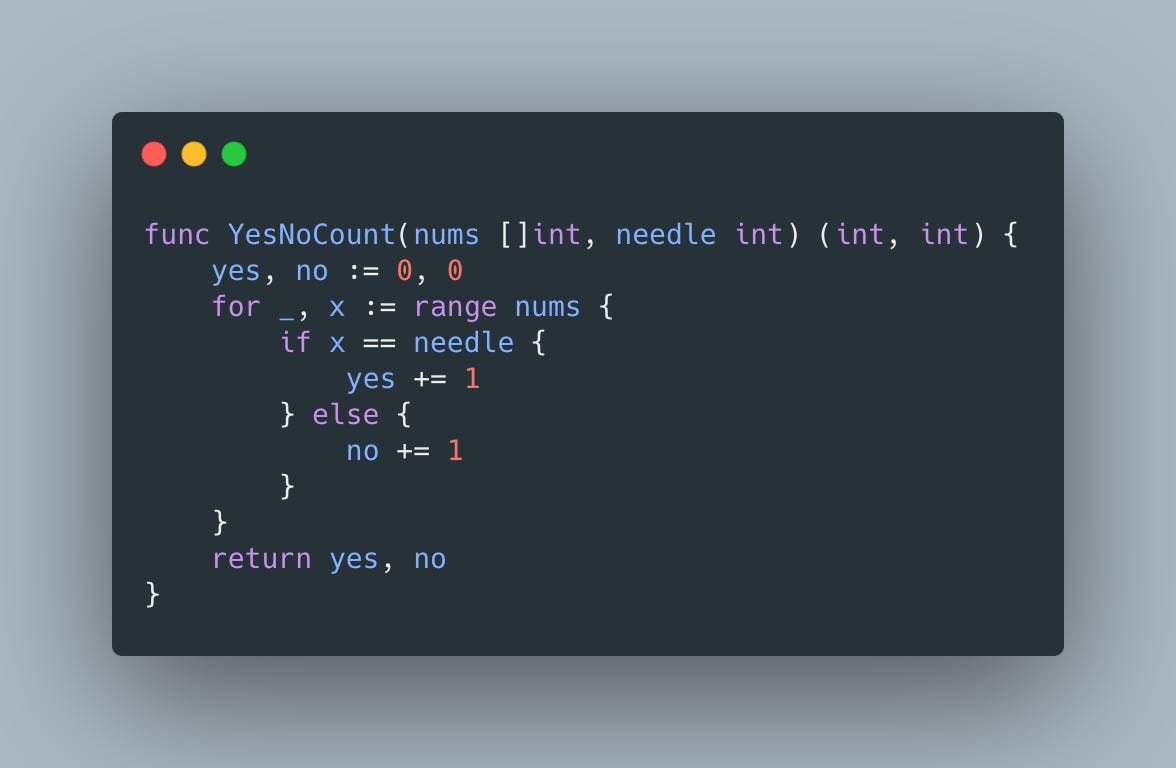

Let’s use a simple counting function that returns the number of nums that are equal and not equal to needle:

We’ll use 2 benchmarks to compare the time it takes to execute counting function when all elements are equal to needle

and when all elements are not equal to needle

The results below show that it’s almost 2X slower to count nos:

To understand why let’s take a look at the generated assembly. In particular at the lines handling if branches:

These lines show that after evaluating condition CMPQ R8, AX, for the true case we have to execute JNE 18, which, in theory, should be easy for a branch predictor to predict, and INCQ SI before we start another loop iteration with JMP 10. And in case of false, JNE 18 will jump to INCQ DI and start a new loop iteration with JMP 10.

So it looks like the same number of instructions is executed no matter which branch we take. So what gives?

Well, the difference is in JNE 18 effect - when condition is true, we don’t jump and continue processing following instructions taking advantage of the CPU pipeline

So while JNE 18 is being decoded, CPU can work on fetching INCQ SI and while it’s executed, INCQ SI will be decoded and JMP 10 fetched, but in case condition evaluates to false we have to jump to INCQ DI that hasn’t even been fetched yet and the work that was spent on fetching and decoding instructions for the true case will be discarded.

To avoid this wasted effort and improve efficiency, CPUs have a branch predictor used for speculative execution and the best way to see its impact is to employ the power of randomness

The benchmark above looks almost identical to ones we’ve used previously but with one small but important difference - the 1s and 0s are populated using random number generator. This small difference has a huge impact though

resulting in ~80% slowdown compared to a version with all 1s and ~70% compared to a version of all 0s.

Since branch predictor behavior is not always obvious, it’s best to use perf tool:

$ perf record ./binary

$ perf stat ./binary

Performance counter stats for './binary:

...

1,605,231,778 branch-misses # 16.04% of all branchesthat will helpfully print the percentage of branches branch predictor made a wrong call and high numbers indicate room for improvement.

So in the perf run case, the binary is the Rand one? and it had 16% misses. What was the Yes' and the No's ?