Linked list vs array list.

Or hidden cost of memory allocations.

One of the first data structures taught in most computer science courses is linked list that may be a great way to practice pointer shenanigans and also demonstrates importance of constant factors in practice. To make the discussion concrete and concise, let’s implement a trivial and not particularly useful List interface

as a singly linked list

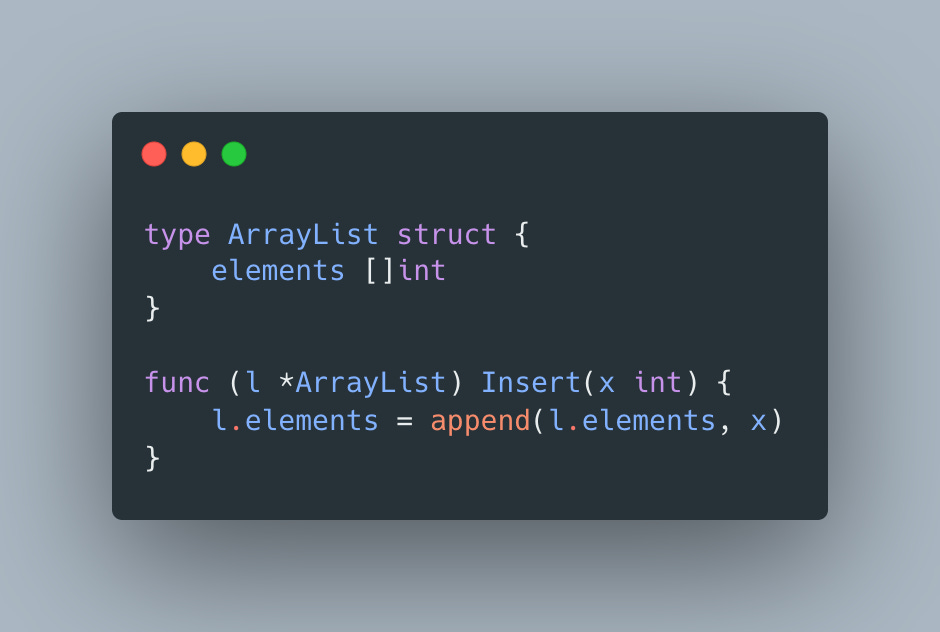

and an array list

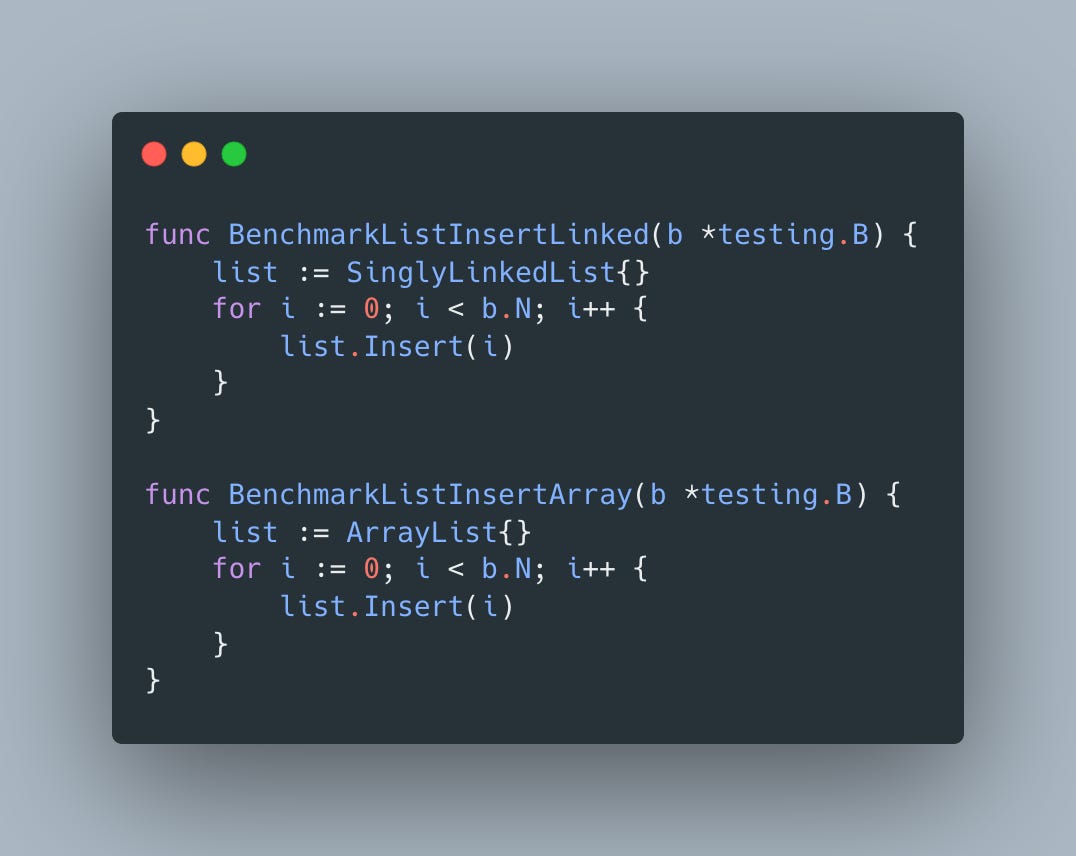

Since Go’s slices are backed by dynamic arrays that have amortized O(1) time cost for appends and singly linked lists have a true O(1) cost, it would be reasonable to expect both implementations take about the same time or singly linked list to perform better since it does not require expensive reallocations. Let’s put our hypothesis to the test using a straightforward benchmark

And the results are

For those of you who are familiar with true cost of memory access this probably does not come as a surprise, but array-backed implementation is almost 3X faster despite its occasional reallocations. Interestingly the cpu profile makes it look like array list inserts should be more expensive due memory copies involved in growing the slice

and memory profile creates even gloomier picture

So how come array list still significantly outperforms singly linked list implementation? The answer is uncovered by adding -benchmem flag to a benchmark execution command:

go test -bench=BenchmarkList -benchmem -memprofile memprofile.outthat produces following output

The answer is in the last column - each insert into linked list requires an allocation, whereas array list has 0, since allocations happen only when the slice needs to grow, which, assuming 2X growth factor, results in log(N) allocations to reach size of N.

This potentially surprising cost of memory accesses is the reason why usage of linked lists is highly discouraged in most modern programming languages, but as always don’t take anyone’s word for it - measure performance for your specific use cases to find the most suitable data structure.