Math to the rescue.

Or how theory helps in practice.

I often hear that school math is boring and has little to no use in practice and even though occasionally it may seem this way, in general it’s extremely useful. Some of these theoretical concepts include commutative and associative laws

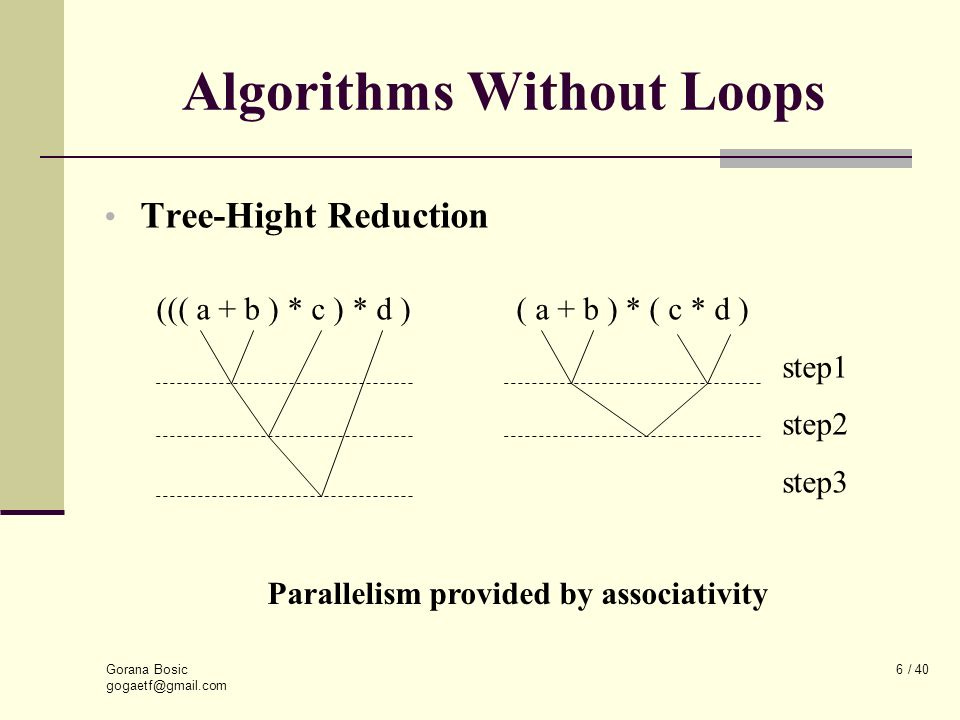

But how these properties can be used in practice? Since associativity effectively allows us to regroup computations, they can be performed in parallel and combined later on:

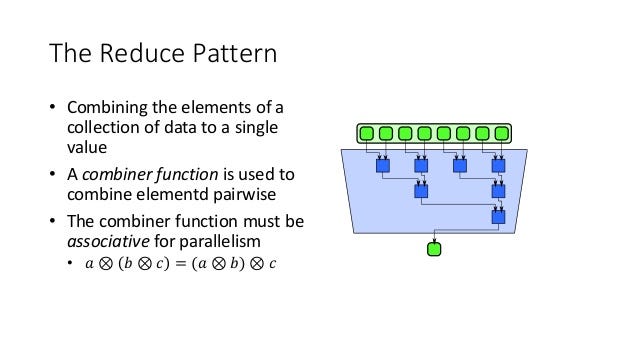

This is most commonly known as reduce pattern:

C++ 17 brought introduced execution policies that can be used to take advantage of these useful properties. For example, an extremely useful std::transform_reduce algorithm explicitly mentions that

The behavior is non-deterministic if

binary_op/binary_op2is not associative or not commutative.

It’s a bit tricky to benchmark algorithms that use execution policies, since they are essentially hints and do not enforce any specific implementation, but we’ll give it a try:

| #include <numeric> | |

| #include <vector> | |

| #include <execution> | |

| const int N = 1000000; | |

| std::vector<double> xs(N, 1.1); | |

| std::vector<double> ys(N, 1.1); | |

| double inner_product_1(const std::vector<double>& xs, const std::vector<double>& ys) { | |

| return std::inner_product(xs.cbegin(), xs.cend(), ys.cbegin(), 0); | |

| } | |

| template <class P> | |

| double inner_product_2(P&& policy, const std::vector<double>& xs, const std::vector<double>& ys) { | |

| return std::transform_reduce(policy, xs.cbegin(), xs.cend(), ys.cbegin(), 0); | |

| } | |

| double inner_product_3(const std::vector<double>& xs, const std::vector<double>& ys) { | |

| return std::transform_reduce(xs.cbegin(), xs.cend(), ys.cbegin(), 0); | |

| } | |

| static void bench_inner_product_1(benchmark::State& state) { | |

| double total = 0; | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(total += inner_product_1(xs, ys)); | |

| } | |

| } | |

| BENCHMARK(bench_inner_product_1); | |

| static void bench_inner_product_2(benchmark::State& state) { | |

| double total = 0; | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(total += inner_product_2(std::execution::seq, xs, ys)); | |

| } | |

| } | |

| BENCHMARK(bench_inner_product_2); | |

| static void bench_inner_product_3(benchmark::State& state) { | |

| double total = 0; | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(total += inner_product_2(std::execution::par, xs, ys)); | |

| } | |

| } | |

| BENCHMARK(bench_inner_product_3); | |

| static void bench_inner_product_4(benchmark::State& state) { | |

| double total = 0; | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(total += inner_product_2(std::execution::par_unseq, xs, ys)); | |

| } | |

| } | |

| BENCHMARK(bench_inner_product_4); | |

| static void bench_inner_product_5(benchmark::State& state) { | |

| double total = 0; | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(total += inner_product_2(std::execution::unseq, xs, ys)); | |

| } | |

| } | |

| BENCHMARK(bench_inner_product_5); | |

| static void bench_inner_product_6(benchmark::State& state) { | |

| double total = 0; | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(total += inner_product_3(xs, ys)); | |

| } | |

| } | |

| BENCHMARK(bench_inner_product_6); |

As expected default version of inner_product, which is sequential performed similarly to sequential version of transform_reduce, but, somewhat surprisingly, the version using parallel execution policy was the slowest one, as neither parallelism nor loop unrolling optimizations were applied:

Parallel unsequenced version has more freedom for vectorization:

The execution policy type used as a unique type to disambiguate parallel algorithm overloading and indicate that a parallel algorithm's execution may be parallelized, vectorized, or migrated across threads (such as by a parent-stealing scheduler).

so its assembly is using SIMD backend:

which is why it’s about 3.7X faster than the version using parallel execution policy. The default version of transform_reduce was compiled into vectorized code combined with loop unrolling

that was sufficient to outperform all other versions.

Takeaways?

think about the mathematical properties of operations you use and how they can be leveraged for parallel execution

C++ defaults can be surprisingly good and should be used, unless benchmarks give a reason to change them