Non-zero cost abstractions.

Or a story of a bad surprise.

Being abstract is something profoundly different from being vague … The purpose of abstraction is not to be vague, but to create a new semantic level in which one can be absolutely precise.

- Edsger Dijkstra

Let's take a look at the snippet of code performing exponentiation:
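A minimal sketch of such a snippet, assuming an `int` argument and an `int` return type (suggested by the conversions described further down). The function name `sixth_power` and the helper `check_sixth_power` are hypothetical, chosen only for illustration.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical name; the int parameter and int return type are assumptions.
int sixth_power(int x) {
    // std::pow promotes integer arguments to double and returns a double,
    // which is implicitly converted back to int by the return statement.
    return std::pow(x, 6);
}

// Tiny sanity check; call it from main() when trying the sketch out.
inline void check_sixth_power() {
    assert(sixth_power(2) == 64);
}
```

Compiling a function like this with optimizations enabled and inspecting the assembly is one way to see the conversions and the library call.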

It would be reasonable to expect pow(x, 6) to be expanded into something like x * x * x * x * x * x or better yet:

which reduces the number of multiplications from 5 to 3.
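One way to reach three multiplications is to compute the cube first and then square it. The sketch below assumes that decomposition; the name `pow6_three_muls` is hypothetical, and other three-multiplication orderings exist.

```cpp
#include <cassert>

// Hypothetical helper: computes x^6 as (x^3)^2.
// The result fits in a 32-bit int only for |x| <= 35.
constexpr int pow6_three_muls(int x) {
    const int cube = x * x * x;  // two multiplications
    return cube * cube;          // third multiplication
}

// Tiny sanity check; call it from main() when trying the sketch out.
inline void check_pow6_three_muls() {
    assert(pow6_three_muls(3) == 729);
}
```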

Unfortunately, the generated assembly comes with a couple of bad surprises:

- x has to be converted from integer to floating-point
- the literal 6 is also stored as a floating-point number in d1
- the less efficient floating-point version of pow is invoked, which adds function call overhead
- the floating-point result of the pow computation, stored in d0, has to be converted back into the integer return value.
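The steps listed above can be spelled out in source form. This is only a sketch of what the implicit conversions amount to, not the compiler's actual output; the name `sixth_power_explicit` and the `int` signature are assumptions.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical function that makes each implicit step visible.
int sixth_power_explicit(int x) {
    const double base = static_cast<double>(x);      // int -> double conversion
    const double exponent = 6.0;                     // the literal 6 as a double
    const double result = std::pow(base, exponent);  // floating-point pow call
    return static_cast<int>(result);                 // double -> int conversion
}

// Tiny sanity check; call it from main() when trying the sketch out.
inline void check_sixth_power_explicit() {
    assert(sixth_power_explicit(2) == 64);
}
```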

Let's compare this assembly with the one generated for the naive hand-written version:
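A naive hand-written version might look like the sketch below, assuming it is the plain chain of multiplications mentioned earlier. The name `pow6_naive` is hypothetical.

```cpp
#include <cassert>

// Hypothetical naive version: five multiplications as written in the source.
// Integer multiplication can be regrouped freely, so an optimizing compiler
// may emit fewer multiplications than appear here.
constexpr int pow6_naive(int x) {
    return x * x * x * x * x * x;
}

// Tiny sanity check; call it from main() when trying the sketch out.
inline void check_pow6_naive() {
    assert(pow6_naive(2) == 64);
}
```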

Lo and behold, we get the optimal assembly:

that is a little different from the version we've speculated above but still uses the optimal number of multiplications - 3.

This violates the principle of least surprise and one of the aspects of zero cost abstractions promised by C++:

Optimal performance: A zero cost abstraction ought to compile to the best implementation of the solution that someone would have written with the lower level primitives. It can’t introduce additional costs that could be avoided without the abstraction.

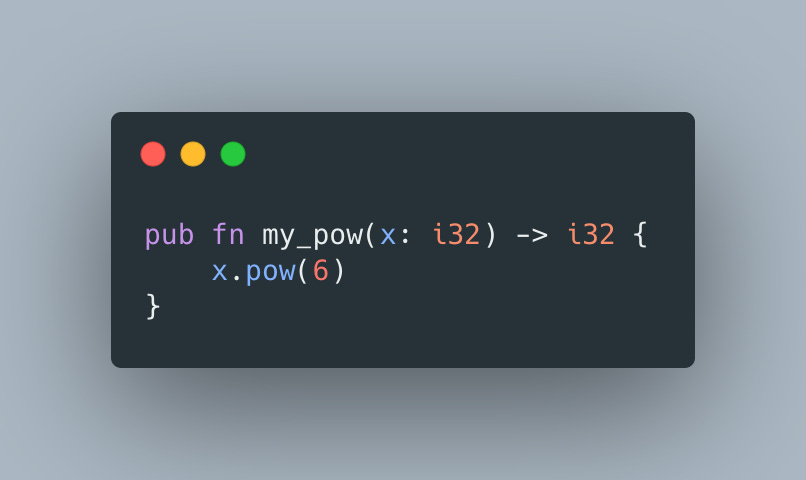

Rust is another popular programming language promising zero cost abstractions, so let's see how it would handle the same computation:

And we've got a winner:

Rust was able to inline the pow invocation and generate almost optimal code that, interestingly, matches the approach we've discussed at the beginning of the article. It comes with an extra movl instruction that could be eliminated, but it's still a lot more efficient than the C++ version.
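For comparison, an integer-only power function in C++ avoids the floating-point round trip entirely. The sketch below uses exponentiation by squaring; `ipow` is a hypothetical helper, not a standard library function, and whether a particular compiler reduces `ipow(x, 6)` to three multiplications depends on its inlining and unrolling decisions.

```cpp
#include <cassert>

// Hypothetical integer power via exponentiation by squaring.
// Signed overflow is not checked; callers must keep the result in range.
constexpr int ipow(int base, unsigned exp) {
    int result = 1;
    while (exp != 0) {
        if (exp & 1u) {
            result *= base;  // multiply in the current power of base
        }
        exp >>= 1;
        if (exp != 0) {
            base *= base;    // square only if another bit remains
        }
    }
    return result;
}

// Tiny sanity check; call it from main() when trying the sketch out.
inline void check_ipow() {
    assert(ipow(2, 6) == 64);
}
```

With a constant exponent, the loop has a fixed trip count, which gives the optimizer a chance to unroll it into straight-line multiplications.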

Moral of the story? Don't violate the principle of least surprise: either fulfill the promise of zero-cost abstractions or be explicit about the shenanigans that happen under the hood.