In the previous post we used Go benchmarks to measure the performance difference in total age computation between an array of structs and a struct of arrays. As expected, parallel arrays, being cache- and prefetch-friendly, delivered a significant performance boost, the size of which depends on how much metadata competes with the age field. The only thing we couldn't check was the impact of vectorization, since the Go compiler still hasn't embraced SIMD instructions.

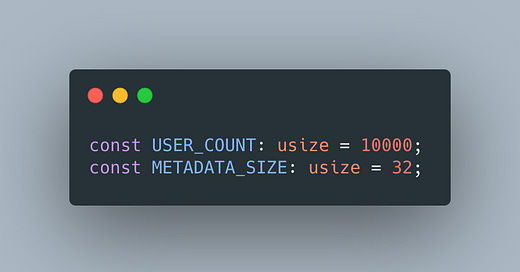

So to verify our hypothesis, this time we'll use Rust, since its compiler uses LLVM under the hood and LLVM can auto-vectorize simple loops. To control the number of users and the metadata size, the following constants are used:
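A minimal sketch of such constants. Only `METADATA_SIZE` is named in the text; `USERS_COUNT`, the `Metadata` alias, and both values are assumptions made for illustration:

```rust
/// Number of users in the benchmark (hypothetical name and value).
pub const USERS_COUNT: usize = 1_000_000;

/// Bytes of per-user metadata; the post varies this between 0 and 64.
pub const METADATA_SIZE: usize = 0;

/// Metadata as a fixed-size byte array (the alias name is an assumption).
pub type Metadata = [u8; METADATA_SIZE];
```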

The metadata is just an array of METADATA_SIZE bytes.

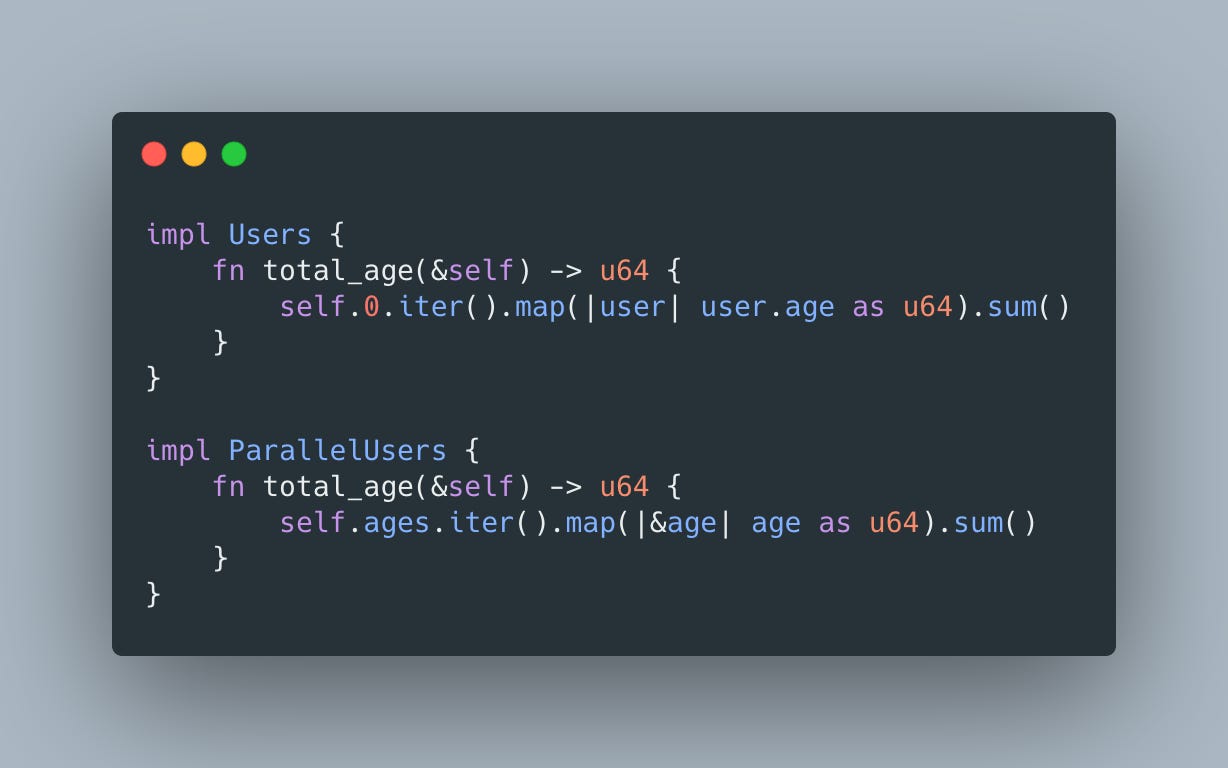

The array of structs is implemented as follows:
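A minimal sketch of this layout, assuming a one-byte `age` field. The names `User` and `new_users`, the fill pattern, and the constant values are hypothetical:

```rust
pub const USERS_COUNT: usize = 1_000_000; // hypothetical value
pub const METADATA_SIZE: usize = 4; // the post varies this between 0 and 64

/// One record: the age we want to sum plus metadata we never read.
#[derive(Clone, Copy, Debug)]
pub struct User {
    pub age: u8, // assumed one-byte age
    pub metadata: [u8; METADATA_SIZE],
}

/// Array of structs: ages are interleaved with metadata in memory.
pub fn new_users(count: usize) -> Vec<User> {
    (0..count)
        .map(|i| User {
            age: (i % 100) as u8, // arbitrary ages in 0..100
            metadata: [0; METADATA_SIZE],
        })
        .collect()
}
```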

and the parallel array version:
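A comparable sketch for the parallel layout, again assuming one-byte ages. The `Users` name, its fields, and the fill pattern are hypothetical:

```rust
pub const METADATA_SIZE: usize = 4; // the post varies this between 0 and 64

/// Parallel arrays: one vector per field, index i describes user i.
pub struct Users {
    pub ages: Vec<u8>, // densely packed, nothing else in between
    pub metadata: Vec<[u8; METADATA_SIZE]>,
}

impl Users {
    pub fn new(count: usize) -> Self {
        Users {
            ages: (0..count).map(|i| (i % 100) as u8).collect(),
            metadata: vec![[0; METADATA_SIZE]; count],
        }
    }
}
```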

For benchmarking we'll again use code that computes the total age of all users:
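One way to write both summations, as a sketch rather than the exact benchmarked code. The function names, the `u64` accumulator, and the one-byte age are assumptions:

```rust
pub const METADATA_SIZE: usize = 4; // the post varies this between 0 and 64

pub struct User {
    pub age: u8, // assumed one-byte age
    pub metadata: [u8; METADATA_SIZE],
}

/// Array of structs: each step moves a whole `User` forward in memory.
pub fn total_age_aos(users: &[User]) -> u64 {
    users.iter().map(|u| u64::from(u.age)).sum()
}

/// Parallel arrays: walks a dense slice that contains only ages.
pub fn total_age_soa(ages: &[u8]) -> u64 {
    ages.iter().map(|&a| u64::from(a)).sum()
}
```

Both functions take slices, so they work on any storage that derefs to a slice, such as a `Vec`.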

When the metadata size is set to 0, both implementations produce efficient vectorized code:

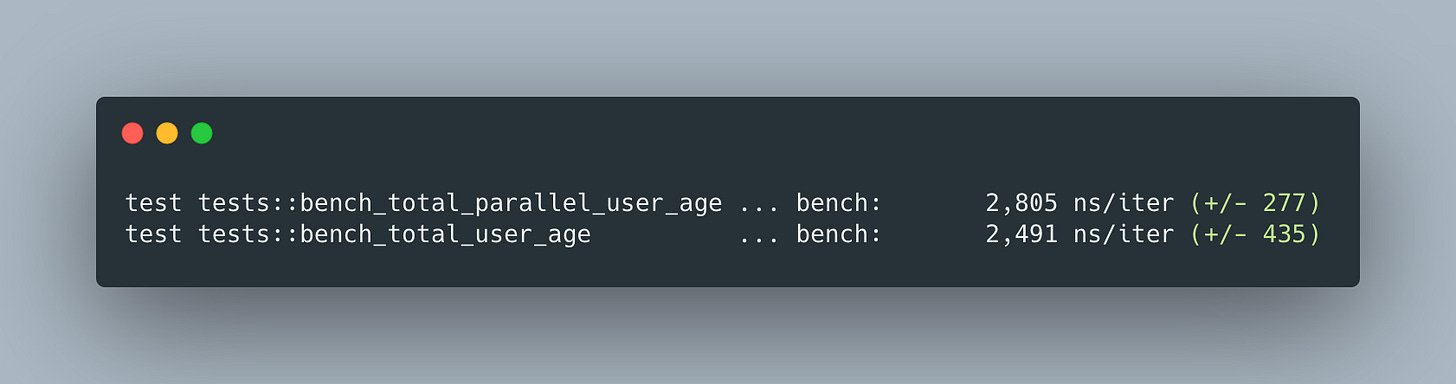

Now it's time to run the benchmarks and see how they perform:
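The text does not say which benchmark harness was used, so the following is only a rough stand-in built on the standard library. The `bench` helper and its parameters are hypothetical:

```rust
use std::hint::black_box;
use std::time::{Duration, Instant};

/// Runs `f` `iters` times and returns the average time per call.
/// `iters` must be greater than zero.
pub fn bench<F: FnMut() -> u64>(iters: u32, mut f: F) -> Duration {
    black_box(f()); // one untimed warm-up call
    let start = Instant::now();
    for _ in 0..iters {
        black_box(f()); // keep the optimizer from discarding the result
    }
    start.elapsed() / iters
}
```

A call might look like `bench(100, || ages.iter().map(|&a| u64::from(a)).sum())`, where `ages` is a `Vec<u8>`. Timings are only meaningful in an optimized build, for example with `cargo run --release`.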

As expected, with no metadata, performance results are very close:

As soon as we increase the metadata size (to 4, for example), vectorization is no longer possible for the array of structs:

but performance results are still fairly close:

As soon as the cache waste reaches 32 bytes, half of a cache line, performance drops by ~2X:

and by ~5X, similar to the Go results, once the metadata size reaches 64 bytes:

So what are the conclusions?

- even though the parallel array structure enabled vectorization, that alone did not have a significant performance impact;
- memory bandwidth and cache efficiency played a critical role in performance.
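To make the cache-efficiency point concrete, here is a small sketch of how many ages arrive with each cache line in the array-of-structs layout. It assumes a 64-byte cache line (the text calls 32 bytes half of one) and a one-byte age; the const-generic `User` and `ages_per_line` are hypothetical helpers, not the benchmarked code:

```rust
use std::mem::size_of;

/// Assumed cache line size in bytes.
pub const CACHE_LINE: usize = 64;

/// User record generic over metadata size (one-byte age is an assumption).
pub struct User<const M: usize> {
    pub age: u8,
    pub metadata: [u8; M],
}

/// Average number of ages delivered per cache line fetched.
pub fn ages_per_line<const M: usize>() -> f64 {
    CACHE_LINE as f64 / size_of::<User<M>>() as f64
}
```

Under these assumptions `ages_per_line::<0>()` is 64, `ages_per_line::<32>()` is just under 2, and at a metadata size of 64 each cache line carries less than one age. This is layout arithmetic only and does not by itself predict the measured slowdowns.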