Reading input faster in Python.

Or readline vs readlines.

We’ve explored many different low-level optimizations at this point, but CPUs have nothing to do until they get their data to process. That’s why memory access is a common bottleneck when it comes to number crunching. Similarly, since many programming contest judges count input processing toward the measured running time, reducing the time taken to read the input may determine the winner.

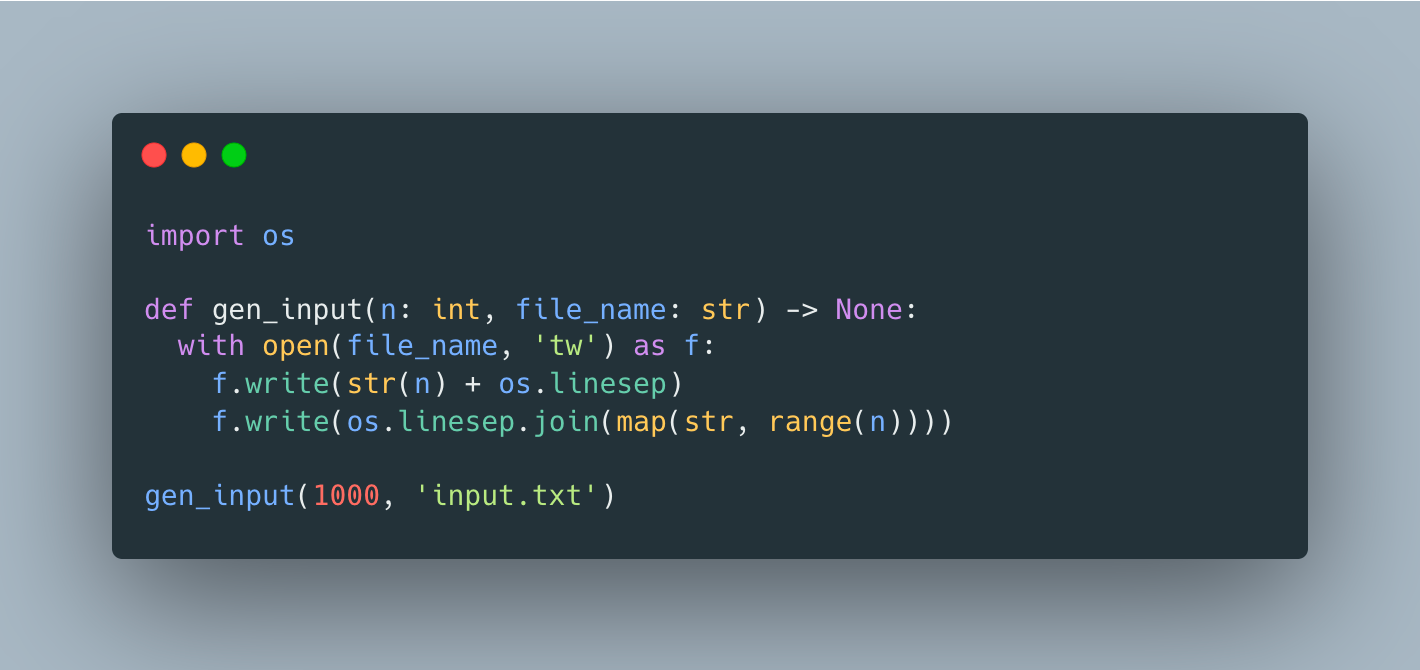

Let’s say we have a typical input format that starts with n, the number of values to read, followed by the n values themselves, one per line. We can easily generate such an input file with a short script.
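The original generator script is not reproduced here, so the following is only a minimal sketch of one way to produce such a file. The file name `input.txt`, the value range, the fixed seed, and the size of 100,000 numbers are all assumptions chosen for illustration.

```python
import random


def generate_input(path, n, seed=0):
    """Write n on the first line, then n random integers, one per line."""
    rng = random.Random(seed)  # fixed seed so the file is reproducible
    with open(path, "w") as f:
        f.write(f"{n}\n")
        for _ in range(n):
            f.write(f"{rng.randint(0, 10**9)}\n")


# Hypothetical file name and size; adjust to taste.
generate_input("input.txt", 100_000)
```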

I often see developers using input() to read input lines one by one. To remove the need for input stream redirection, we’ll use the slightly better file.readline as its replacement here.
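A readline-based reader might look roughly like the sketch below. The function name `read_with_readline` and the tiny sample file are assumptions made so the snippet runs on its own; the input format is the one described above.

```python
def read_with_readline(path):
    """Read n, then n integers, calling readline once per number."""
    with open(path) as f:
        n = int(f.readline())
        # One readline call per value; int() ignores the trailing newline.
        return [int(f.readline()) for _ in range(n)]


# Tiny hypothetical sample file so the sketch is self-contained.
with open("sample_readline.txt", "w") as f:
    f.write("3\n10\n20\n30\n")

print(read_with_readline("sample_readline.txt"))  # [10, 20, 30]
```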

Note that we’re using a list comprehension to avoid list reallocations and the associated overhead, which we discussed in a previous article.

Now, let’s do the same using file.readlines().
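The readlines counterpart could be sketched as follows. Again, the function name `read_with_readlines` and the sample file are illustrative assumptions. This version assumes the file contains no blank lines after the data, since `int("")` would raise a `ValueError`.

```python
def read_with_readlines(path):
    """Read the whole file in one call, then convert every line after the first."""
    with open(path) as f:
        lines = f.readlines()
    # lines[0] holds n, which is not needed here: the list length already tells us.
    return [int(line) for line in lines[1:]]


# Tiny hypothetical sample file so the sketch is self-contained.
with open("sample_readlines.txt", "w") as f:
    f.write("3\n10\n20\n30\n")

print(read_with_readlines("sample_readlines.txt"))  # [10, 20, 30]
```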

It’s a little easier to use, since we technically don’t even need n and can rely on readlines to return all of the lines automatically. Otherwise, it seems like it should perform very similarly.

As always, let’s use runtime as a way to pick the winner.
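One way to run such a comparison outside a notebook is with the standard `timeit` module, as in the sketch below. The helper names, the file name `timing_input.txt`, the input size, and the repeat counts are assumptions. Absolute numbers and the exact ratio will vary with the machine, Python version, and file size.

```python
import random
import timeit


def generate_input(path, n, seed=0):
    """Write n on the first line, then n random integers, one per line."""
    rng = random.Random(seed)
    with open(path, "w") as f:
        f.write(f"{n}\n")
        for _ in range(n):
            f.write(f"{rng.randint(0, 10**9)}\n")


def read_with_readline(path):
    with open(path) as f:
        n = int(f.readline())
        return [int(f.readline()) for _ in range(n)]


def read_with_readlines(path):
    with open(path) as f:
        lines = f.readlines()
    return [int(line) for line in lines[1:]]


PATH = "timing_input.txt"  # hypothetical file name
generate_input(PATH, 100_000)

# Sanity check: both readers must return the same numbers.
assert read_with_readline(PATH) == read_with_readlines(PATH)

# Take the best of several repeats to reduce noise from other processes.
t_readline = min(timeit.repeat(lambda: read_with_readline(PATH), number=5, repeat=3))
t_readlines = min(timeit.repeat(lambda: read_with_readlines(PATH), number=5, repeat=3))

print(f"readline:  {t_readline:.3f} s for 5 runs")
print(f"readlines: {t_readlines:.3f} s for 5 runs")
print(f"readline / readlines: {t_readline / t_readlines:.2f}x")
```

Taking the minimum over repeats is a common choice for micro-benchmarks, since slower runs usually reflect interference from the rest of the system rather than the code under test.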

So it looks like readlines performed ~1.6x better, which is not entirely surprising given the overhead associated with individual readline invocations. This overhead may be even bigger for input(), so next time you’re dealing with large inputs, think about the efficiency of the input APIs you’re using.

You can play with these functions in Colab.