Recursive vs iterative merge sort.

Or when simplicity wins.

We’ve already discussed many benefits of recursion in the previous post

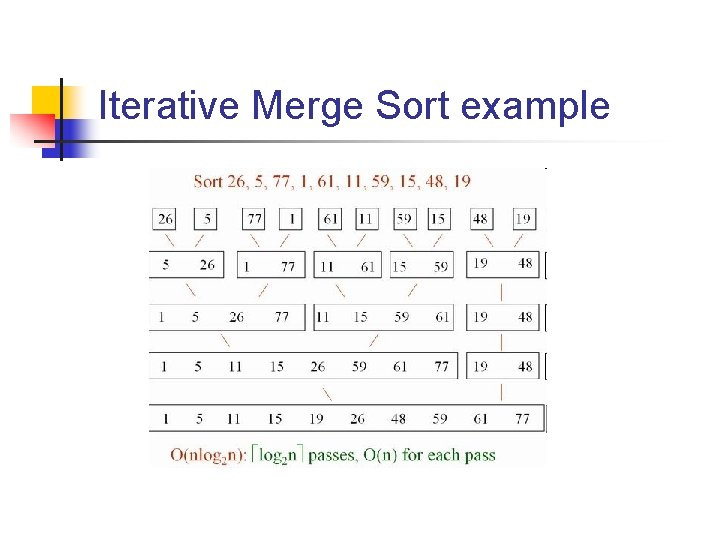

and we’ll continue our exploration by taking a look at recursive and iterative implementations of a fairly common and useful sorting technique - merge sort. In addition to its direct use, merge sort can also be used to solve other problems like counting number of inversions.

Since the both iterative and recursive versions rely on a merge subroutine, its performance is not relevant for today’s discussion and as such we’ll use a naive memory hungry implementation below

even though we could avoid allocations by performing in-place merge or reusing pre-allocated slice. We may revisit this in one of the future posts.

Having our power-horse, recursive merge sort implementation is fairly trivial

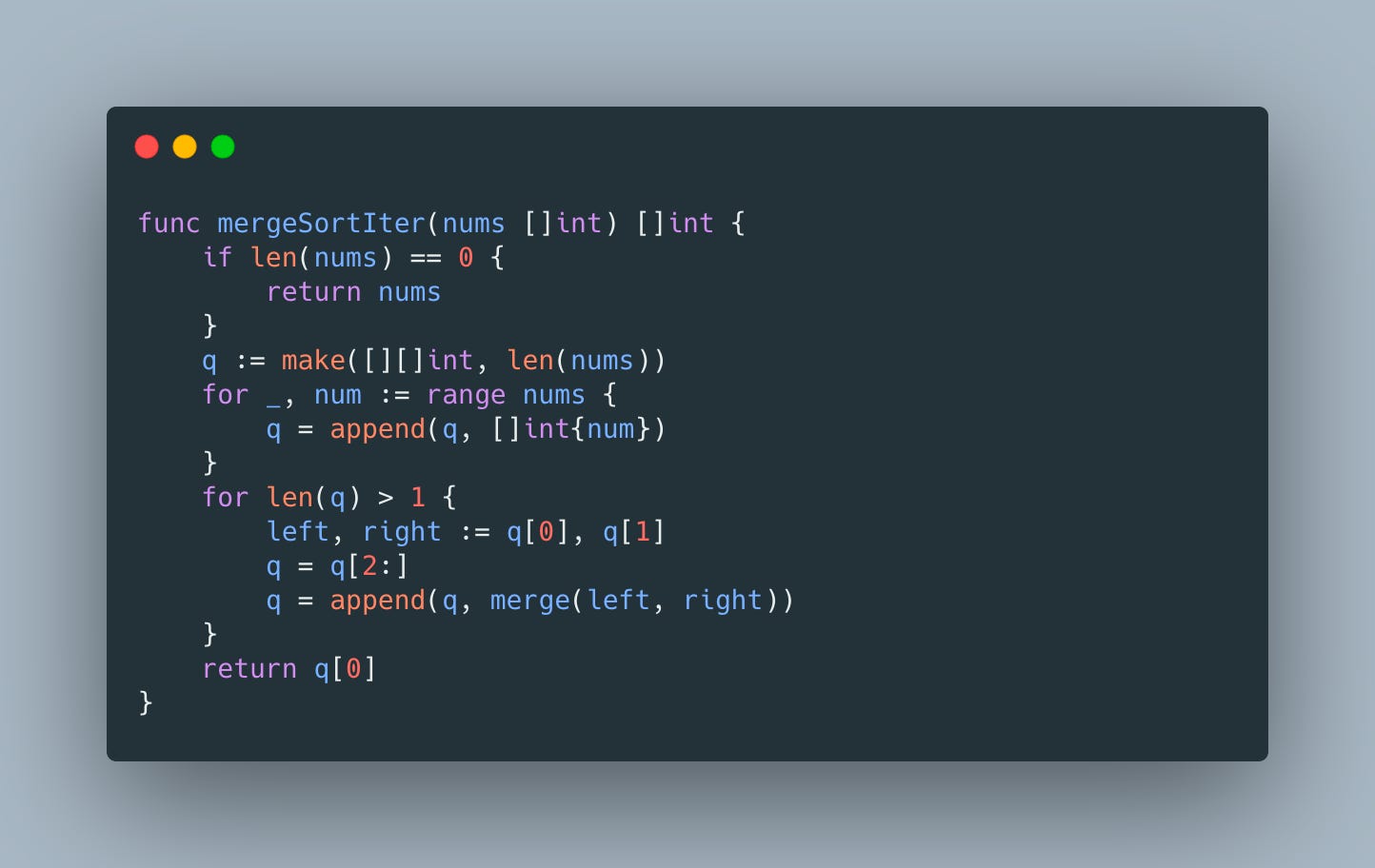

Iterative implementation takes a few more lines but is also very straight-forward

and intuitive - we simply enqueue all input elements as singleton slices and repeatedly dequeue 2 available ones for processing by merge

Finally everything is ready for benchmarking

and the results are

which suggest that our naive recursive merge sort implementation is almost 2.5X faster than its iterative version. 2X difference in the allocations count looks like a good suspect for the reason of this slow-down and CPU profile also agrees

Memory profile confirms that we are talking about 2X difference

causing additional 1.5GB allocations relative to recursive version.

Well, it looks like we’ve encountered yet another example of inefficient memory usage being a culprit of a performance regression and in one of the next posts we’ll try to address this.