reinterpret_cast

Or how to achieve more with less.

A few days ago I was looking for ways to speed up one of our performance sensitive components. It leverages time locality by caching computation results using LRU strategy and enjoyed a lot of engineering cycles making it super fast and efficient. As I couldn’t find a anything meaningful to speed up in the cache itself, I started looking at how it’s used and the way cache key is computed caught my attention. For legacy reasons, the type of the key has to be std::string but, since the majority if cached entries are “intern”ed, their addresses serve as cache keys. In other words we have a

void put(std::string key, Blob blob);

interface for cache and it’s used as following

cache.put(getUniqueKeyOf(blob), blob);

getUniqueKeyOf(blob) would basically take the address of the blob and convert it to string

return folly::to<std::string>((uintptr_t)blob.getAddress());

So far so good. Internally folly is using a table but the icicle view was making it perfectly clear that there was a lot going on under the hood

So I started to go back to first principles and think about the actual goal we need to achieve here. Keys definitely have to be unique, since there must be a way to distinguish different blobs. Do they have to be readable though? Not really, nobody ever looks at them and they would be different from run to run so preserving an actual address does not seem to buy us much. How can we leverage this information?

Before we start experimenting, let’s establish a baseline, so I wrote a benchmark and saved its results

31.21ns 32.04M

Now, it’s show time. The first naive attempt was to use simply stuff address bytes one by one into the the resulting string interpreted as chars. This had a noticeable performance improvement:

24.02ns 41.63M

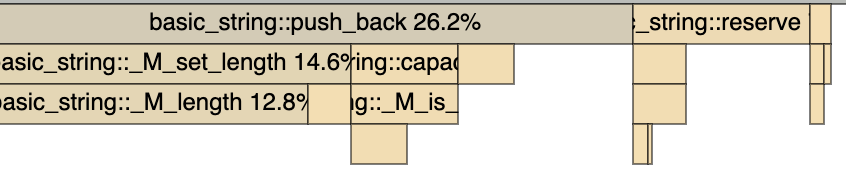

and unsurprisingly made push_back the bottleneck

Well, we know that the size of the string will not be larger than the number of bytes in the address, so why don’t we std::string::reserve the space in advance? It improved performance further

17.90ns 55.86M

but Icicle view showed that the push_back and reserve are still a problem

If only we could skip push_backs and reserves and use raw character array… But we can! std::string has a constructor that accepts const char*, so we can write all bytes into our own character array allocated on the stack and pass it to the final string. Just don’t forget to use an overload that takes an array length as a second parameter, since some of the bytes can be 0s which string’s constructor would interpreter as a NULL terminator and ignore the rest of the bytes otherwise. As expected it improved performance even further:

10.73ns 93.20M

At this point, I was pretty happy with 3X improvement and was about to submit a change for review, but suddenly I realized that I’m basically writing an inefficient version of a memcpy… Why don’t I let the compiler do this for me?

This one-liner got the benchmark numbers to

1.01ns 993.14M

Getting ~30X improvement I finally stopped and submitted code for review. Just out of curiosity I also took a look at the generated assembly for uintptr_t instantiation:

As you can see it’s super simple and does not involve any allocations - we just need to copy the content of the address to the string storage, add NULL terminator and set string size.

reinterpret_cast is a sharp tool and should only be used when appropriate. It can be extremely useful for zero-copy serialization/deserialization by exposing raw mmaped memory through reinterpreted iterators.

btw, I am confused of "preserving an actual address does not seem to buy us much". Did you eventually used the address of the blob to write it as/to string?

does this rely on SSO of std::string?