Saving space with dictionary arrays.

Or replacing expensive types with cheap ones.

Even though the first resource that comes to mind when dealing with performance is CPU, memory is a common reason for CPU not being able to reach its full potential. Since many workloads involve processing and/or storing strings, many interesting data structures and algorithms have been developed to deal with different use-cases.

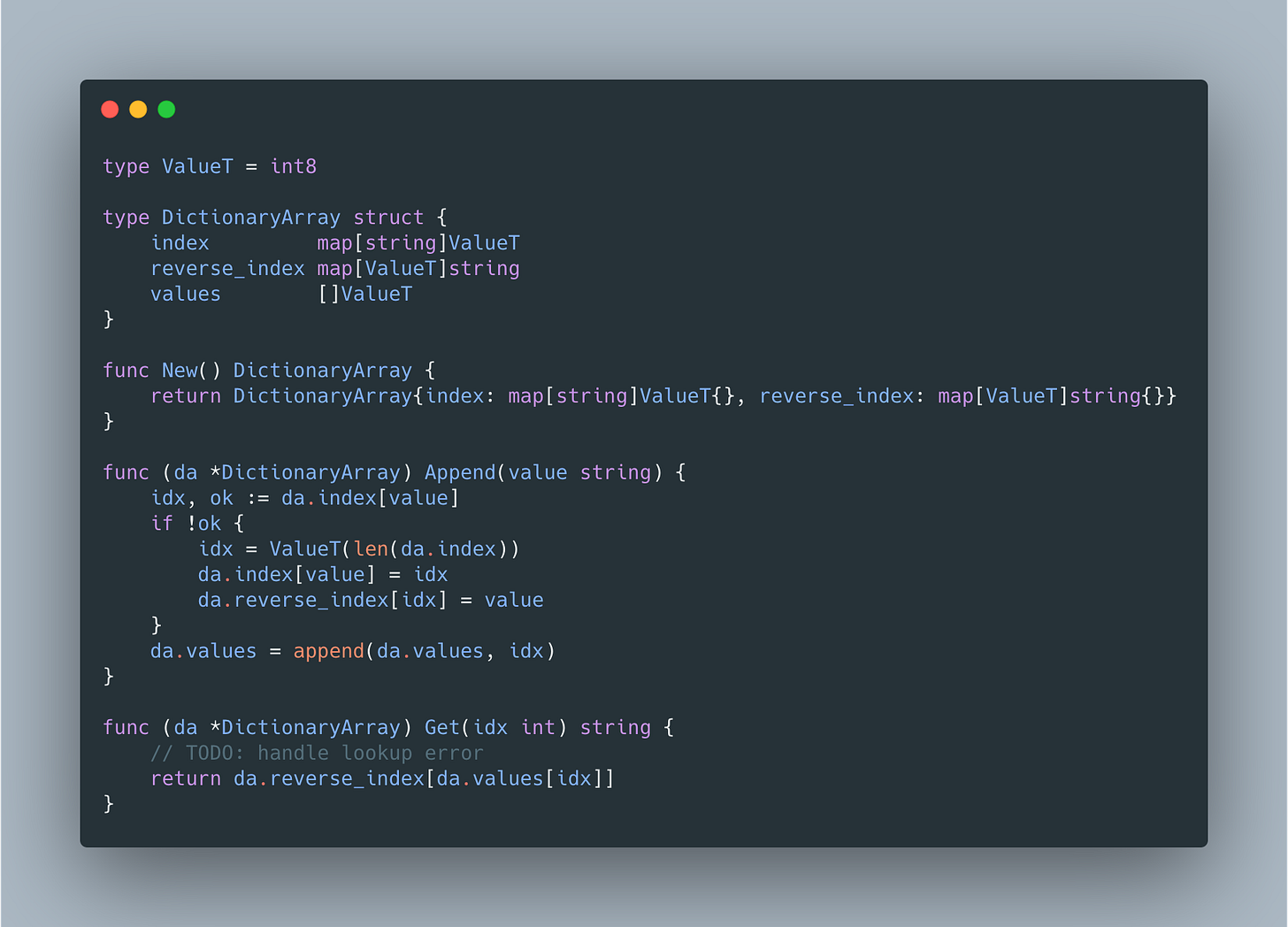

One of such data structures is a DictionaryArray. There are many ways to implement it, but one of the simplest ones and the one that supports online processing in Go can look like:

ValueT depends on the number of unique string values we expect - low-cardinality value sets are compressed best, e.g. state or country names, etc.

There are also additional ways to reduce memory usage by getting rid of maps and replacing them with a slice of keys that can additionally be sorted to enable binary search-based lookups.

But even current implementation delivers significant memory reduction. For benchmarking we'll use the names of states conveniently provided by gofakeit package:

The numbers for DictionaryArray look as follows:

and for a slice:

so DictionaryArray uses only ~5.9% of memory used by slice. Not bad for a naive implementation, which is why more efficient implementations are used in such performance-sensitive projects like Apache Arrow we might explore further in the following posts.