To bit or not to bit.

Or don't rely on just your intuition.

One of the C++’s superpowers is template specialization that allows optimizing memory usage and/or runtime performance for individual data types. A well known example is usage of a bitset for boolean vectors. Even though it has a number of issues, using a single bit instead of an a byte, in case of char, or multiple bytes in case of other integer types is very tempting. Such drastic memory usage combined with knowledge that memory is the usual suspect when it coms to processing bottlenecks, it’s easy to assume that we can expect significant speedups from such specialization. What can possibly go wrong? Shouldn’t we trust our gut after all?

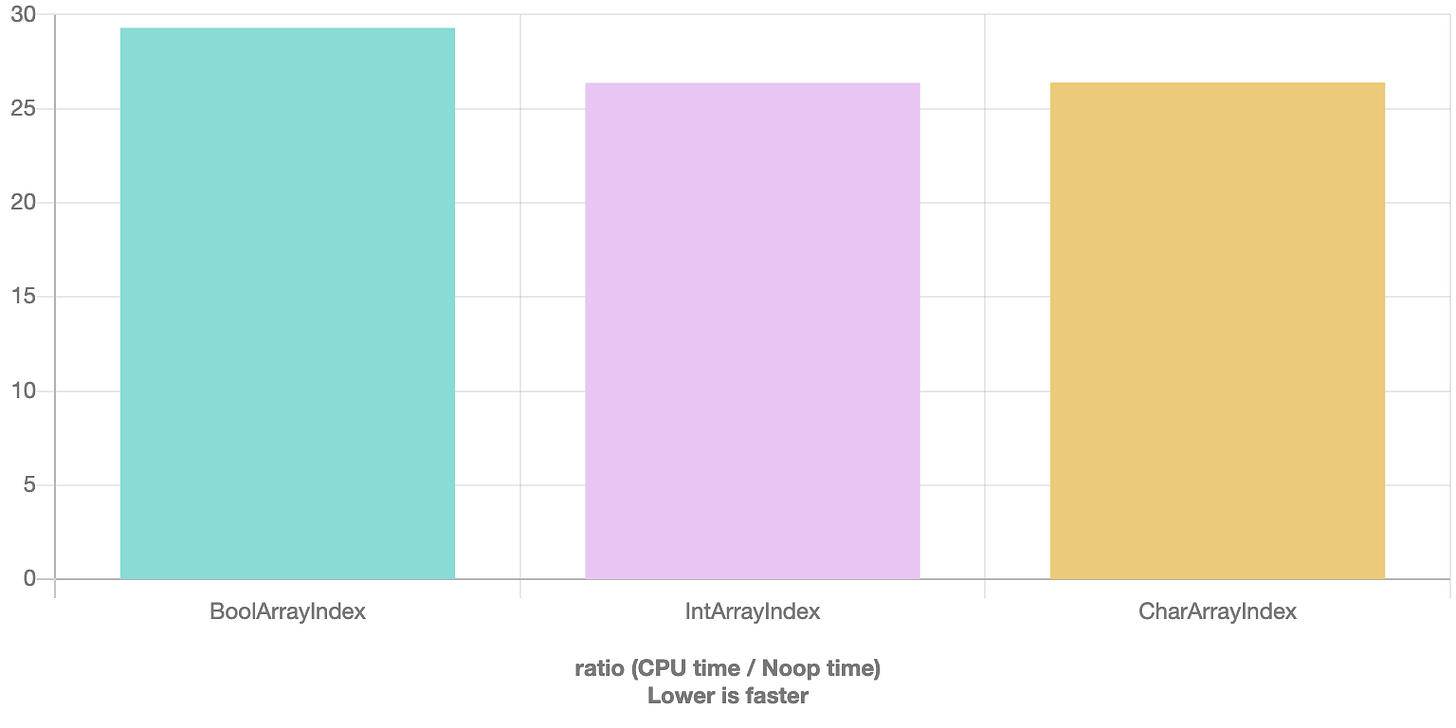

Let’s put our hypothesis to the test. For this we’ll check 2 common usage patterns. First, we’ll take a look at random index accesses of boolean, integer and char vectors:

And even though the results are fairly close, the int and char vectors are ~10% faster

The results are likely to be explained by the extra instructions required for selecting the bit at the index

For char vectors the same code results in

that is clearly shorter and can explain the speedup.

But what if we need to unconditionally traverse the entire vector

Intuitively, it seems like because we can pack a lot more items into the cache, bool vector should delivery some impressive speedups, but that’s when we get the second and much bigger surprise - sum of items in bool array is 27X slower!

And the answer is in the assembly. The bool vector assembly

is vectorized and unrolled for char and int versions

That’s not to say boolean vectors are always evil, especially in memory constrained environments, but it showcases importance of verifying our assumptions with measurements.

I think the whole Proxy object returns from the op[] is not going to help the compiler for vectorization/simd