Using golang bit trick for fast bucket selection.

Or number of instructions vs their cost.

Hashing rules the world. From hashtables to fast substring search and efficient data synchronization using Merkle trees, hashing is a humble secret sauce that is easy and cheap to use. Today, we’re going to look at one of the trick that golang map implementation is using to speed up bucket computation.

Since the first step involved in lookups is bucket computation, its cost is very important. Usually, once hash is computed, a modulo operator is used to map its value into one of the buckets - hash % bucket_count. But this operator is very expensive, but we know that % power_of_two is equivalent to & with a mask of trailing zeroes. For example, hash % 4 (0b0100 in binary) is equivalent to hash & 0b11 which is why & 1 is frequently used as an alternative of % 2.

That’s why golang instead of bucket count is stores a log2 of the bucket count in hmap.B

and its bucketShift function is using the above bit trick

Intuitively it makes sense, but since in performance “trust but verify” is one the core principles, let’s put this to a test.

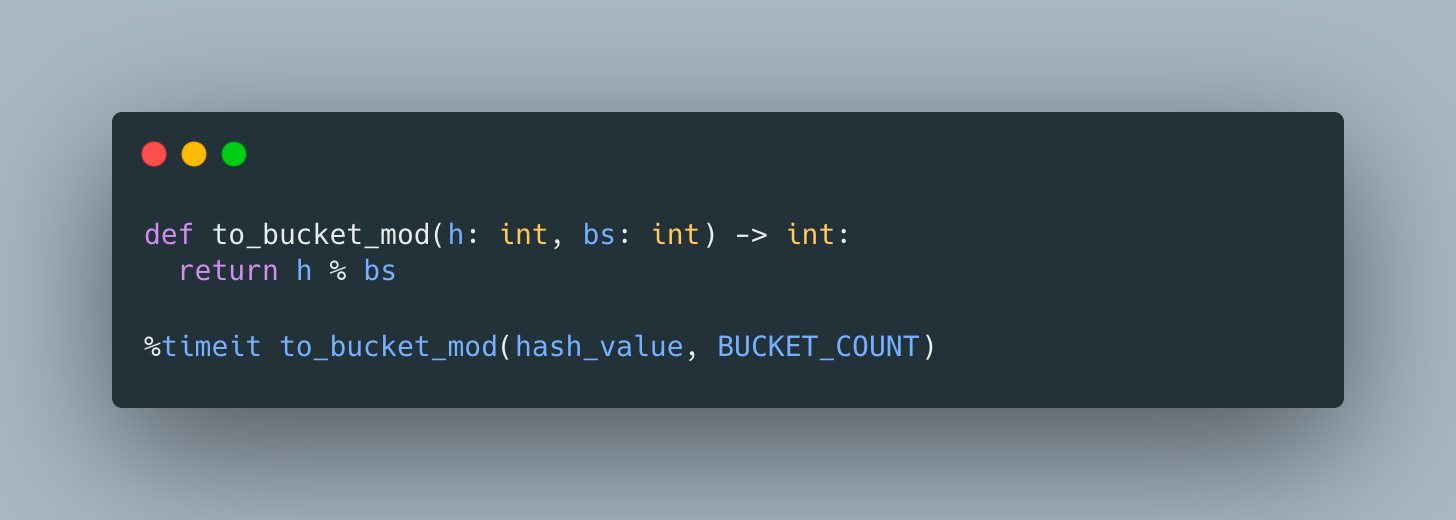

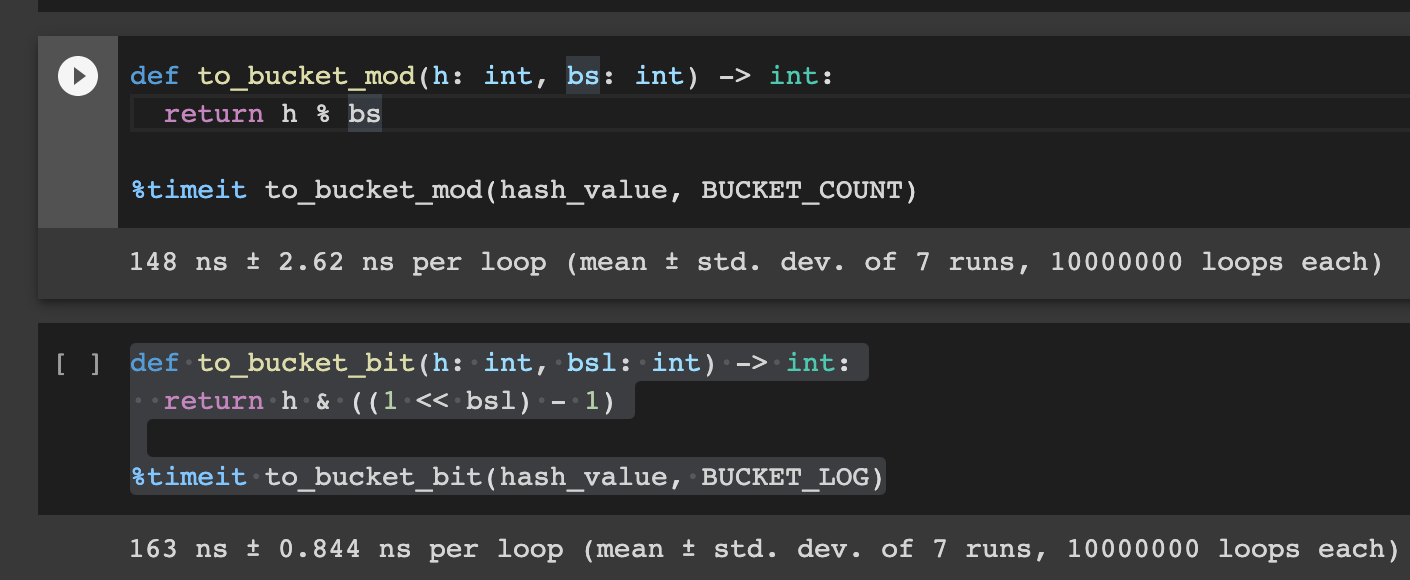

First, let’s see if this helps in Python and compare

and

The result was somewhat surprising

As is often the case, lack of direct mapping from source to assembly, results in more Python bytecode instructions which have to be executed by Python VM. The cost of executing bytecode instructions dominates and we end up with ~10% regression.

You can explore this code more in codelab.

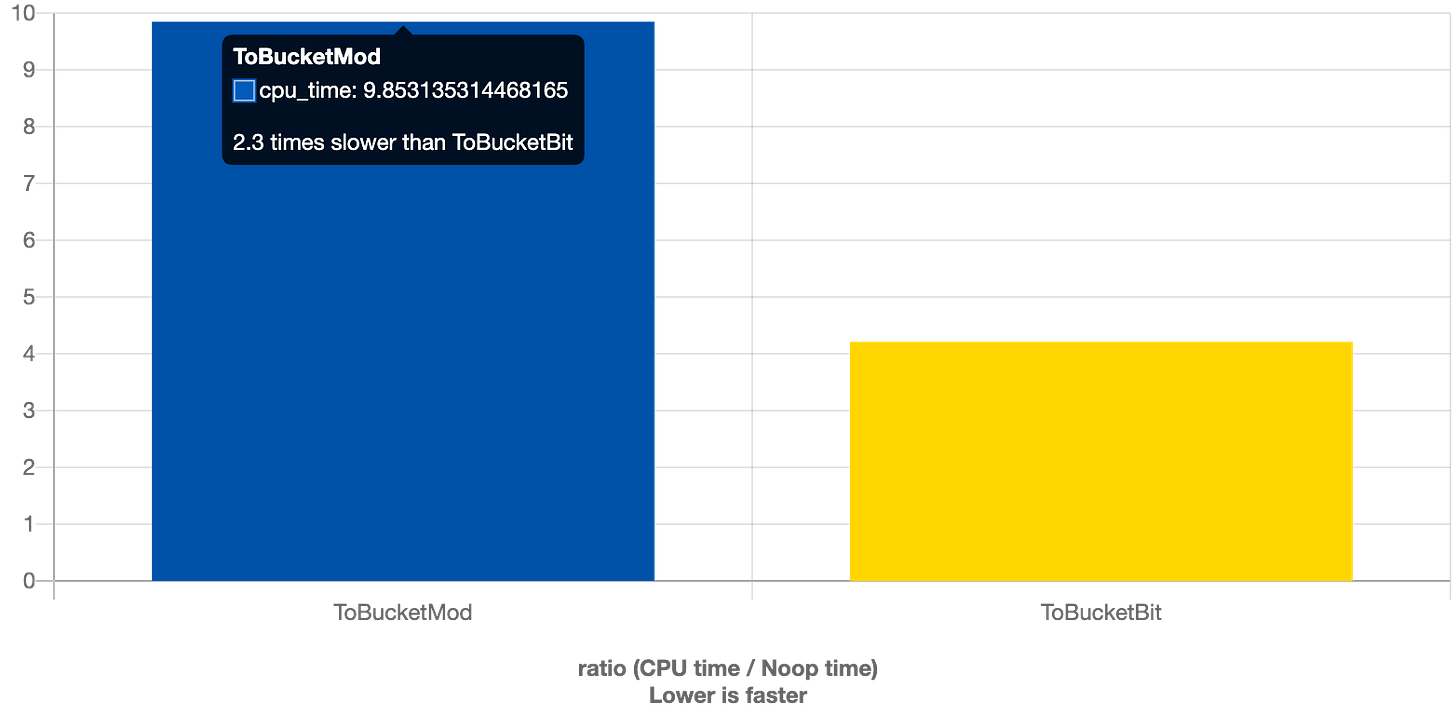

Ok, no luck with Python, but what about C++?

And it’s a success - 2.3X speedup!

This serves as a reminder that we should be mindful about copying code patterns from one programming language to another, especially when it comes to languages with different runtimes and execution models.

Developers that can leverage optimizing compilers, often benefit from such optimizations for free, but it’s only possible if compiler can prove that bucket count is a power of 2 at compile time, which isn’t the case when for dynamic data structures like hashtables, so that’s our opportunity to leverage our domain knowledge for better performance.