Vectorizing utf8_length_from_utf32.

Or vectorization != better performance.

Daniel Lemire is a unique instance of first-principle thinking in the world of performance. Instead chasing incremental improvements over existing algorithms he uses hardware characteristics to establish theoretical limits and then designs an algorithms to hit them. The most famous example of his work is a simdjson library. To better appreciate his work, recently I’ve been reading code of Daniel’s other libraries and stumbled upon a function to calculate UTF8 length for the UTF32 encoded string.

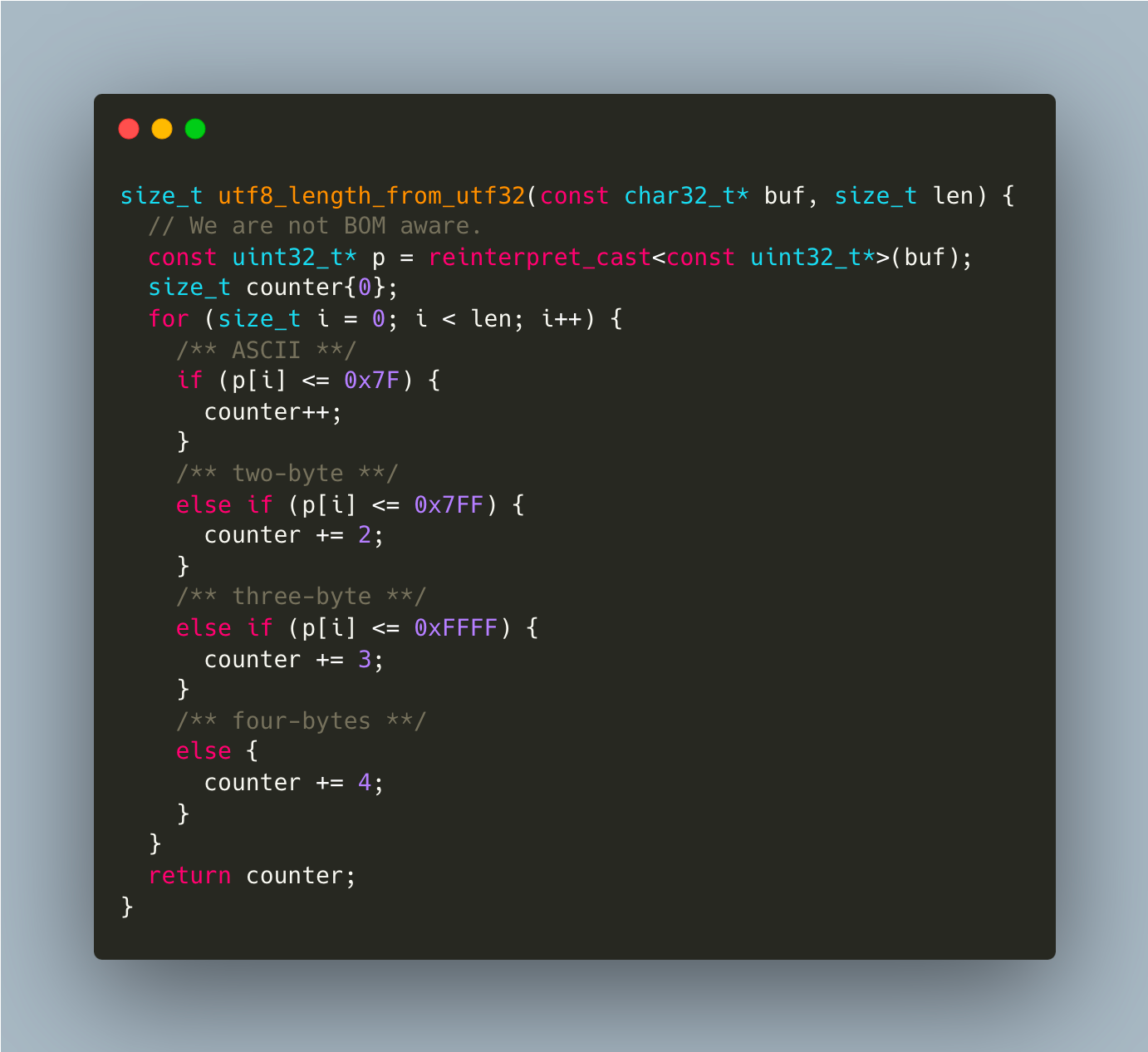

It’s not a secret that branching is not particularly performance friendly - it complicates life to optimizer and creates more work for branch predictor. This code is translated into very much expected

with cmp and branch used for each if.

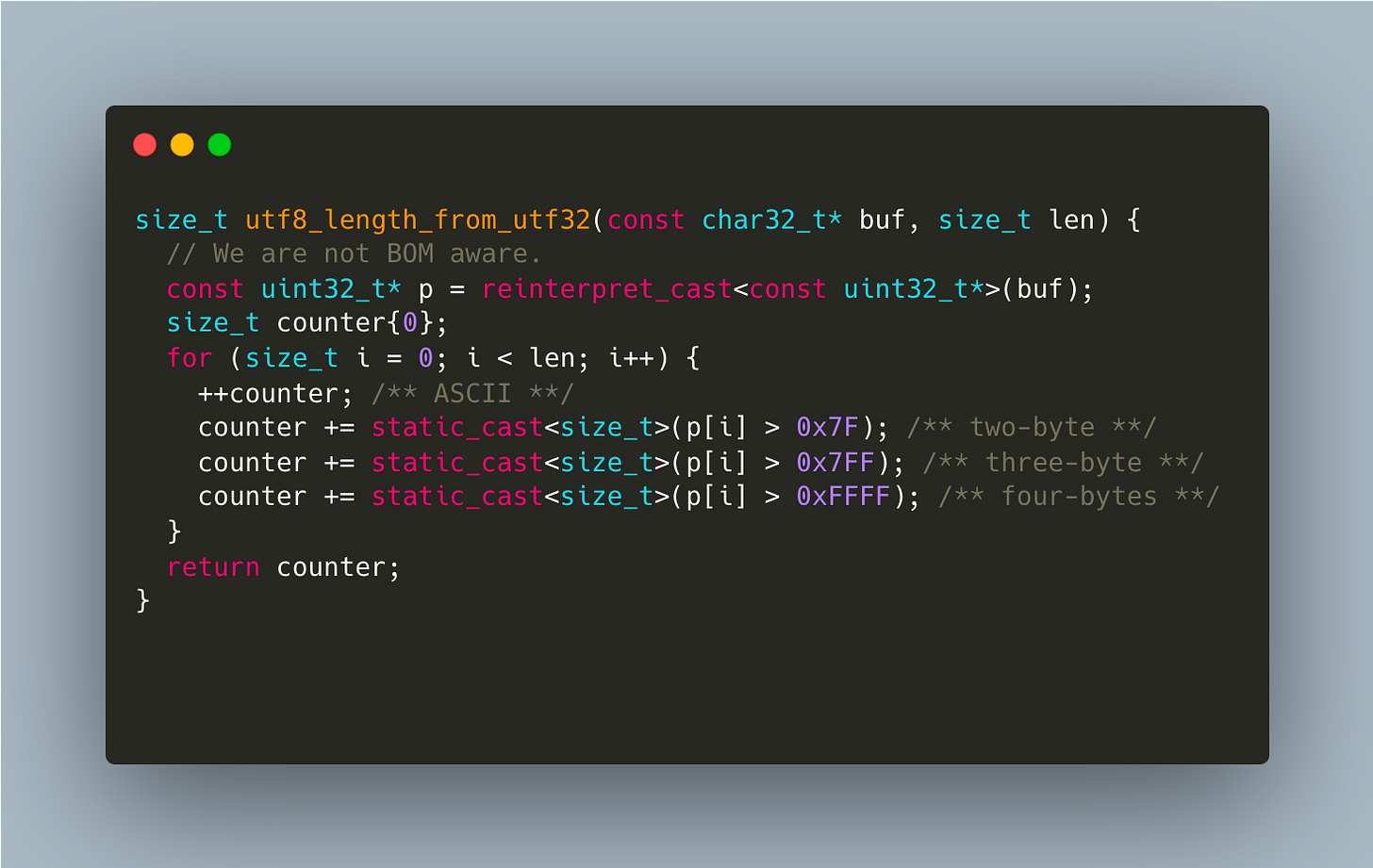

After staring at this code for a few seconds I’ve noticed that it follows a fairly predictable pattern - each chat32_t goes through a ladder with each step adding +1 to final utf8 length. It’s easier to see this in code

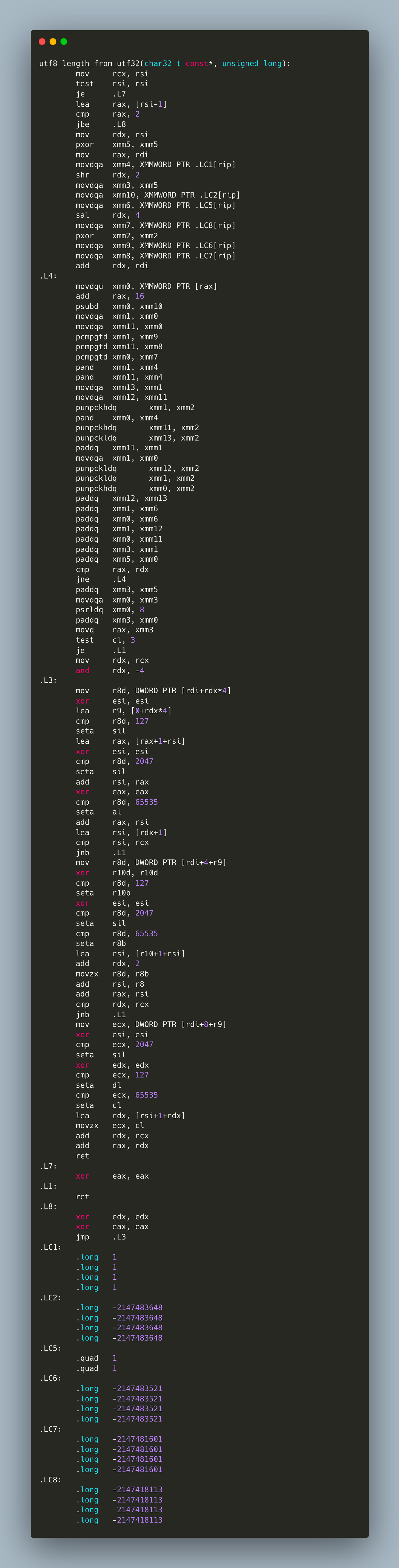

Note that this implementation is branchless - we just add 0 or 1 depending on whether current character exceeds certain threshold. This straightforward implementation is much easier for compiler to grok, so it figures that it’s possible to vectorize it

Sure, it’s more verbose but we are now using the power of SIMD, right?

Let’s see

| #include <cstddef> | |

| #include <cstdint> | |

| #include <cuchar> | |

| size_t utf8_length_from_utf32(const char32_t* buf, size_t len) { | |

| // We are not BOM aware. | |

| const uint32_t* p = reinterpret_cast<const uint32_t*>(buf); | |

| size_t counter{0}; | |

| for (size_t i = 0; i < len; i++) { | |

| /** ASCII **/ | |

| if (p[i] <= 0x7F) { | |

| counter++; | |

| } | |

| /** two-byte **/ | |

| else if (p[i] <= 0x7FF) { | |

| counter += 2; | |

| } | |

| /** three-byte **/ | |

| else if (p[i] <= 0xFFFF) { | |

| counter += 3; | |

| } | |

| /** four-bytes **/ | |

| else { | |

| counter += 4; | |

| } | |

| } | |

| return counter; | |

| } | |

| size_t utf8_length_from_utf32v(const char32_t* buf, size_t len) { | |

| // We are not BOM aware. | |

| const uint32_t* p = reinterpret_cast<const uint32_t*>(buf); | |

| size_t counter{0}; | |

| for (size_t i = 0; i < len; i++) { | |

| ++counter; /** ASCII **/ | |

| counter += static_cast<size_t>(p[i] > 0x7F); /** two-byte **/ | |

| counter += static_cast<size_t>(p[i] > 0x7FF); /** three-byte **/ | |

| counter += static_cast<size_t>(p[i] > 0xFFFF); /** four-bytes **/ | |

| } | |

| return counter; | |

| } | |

| const char32_t text[] = U"eckwd4c7cu47r2wfeckwd4c7cu47r2wfeckwd4c7cu47r2wfeckwd4c7cu47r2wfeckwd4c7cu47r2wfeckwd4c7cu47r2wfeckwd4c7cu47r2wfeckwd4c7cu47r2wf"; | |

| // const char32_t text[] = U"MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前MajiでKoiする5秒前"; | |

| static void BH_length(benchmark::State& state) { | |

| const auto text = (const char32_t *)state.range(0); | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(utf8_length_from_utf32(text, 129)); | |

| } | |

| } | |

| BENCHMARK(BH_length)->Arg((int64_t)text); | |

| static void BH_lengthVec(benchmark::State& state) { | |

| const auto text = (const char32_t *)state.range(0); | |

| for (auto _ : state) { | |

| benchmark::DoNotOptimize(utf8_length_from_utf32v(text, 129)); | |

| } | |

| } | |

| BENCHMARK(BH_lengthVec)->Arg((int64_t)text); |

And the results are

What a bummer! For regular ASCII string we take a significant performance hit. For mixed unicode strings results are not as bad

but still not something we were hoping for.

My attempt landed in ada-url as part of https://github.com/ada-url/idna/pull/33 but the lesson here is - don’t assume SIMD is always magically better and trust only benchmarks using representative inputs.

You can look for the answer “why” using tools like uiCA or reading hardware manuals but at the end of the day what matters is measured result.

Loved this one! Surprising to see a case where an obvious Simd application ends up much slower