We still know our code better than compilers.

Or a case of an unnecessary CPU lock instruction.

Compilers brought a huge productivity and performance boost thanks to their ability to translate high-level abstractions into highly optimized low-level instructions. In fact, they are so good at optimizing our code that we simply expect them to understand it even better than we do, and for the most part that is a reasonable expectation.

At the same time, it's important to remember that we can still reason about our code better than a compiler can, and when we expect a particular optimization, we should always verify it by checking the generated assembly.

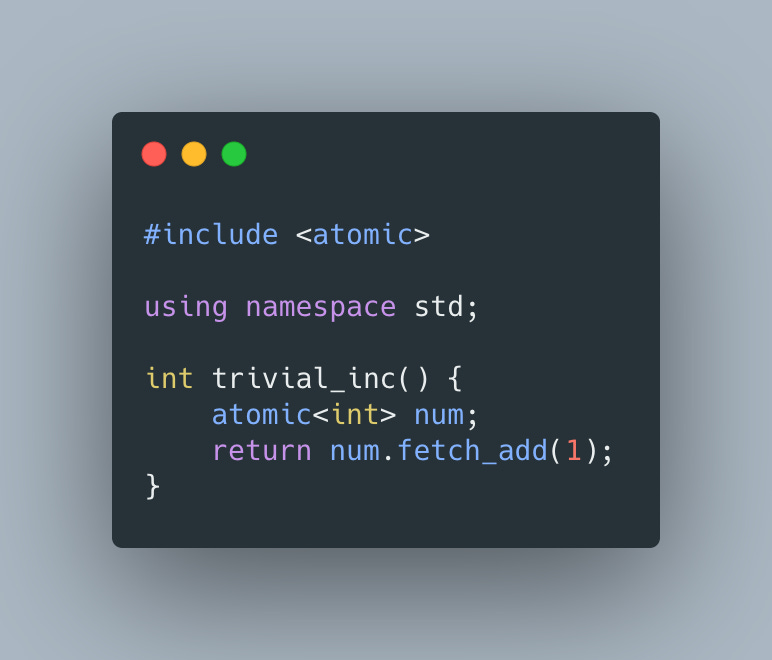

Take the following code snippet as an example:
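A minimal sketch of such a function, consistent with the description that follows: a local atomic int, initialized to 0, that never leaves trivial_inc. The exact body (a single increment whose result is returned) is an assumption made for illustration, not necessarily the original listing.

```cpp
#include <atomic>

// Assumed shape of trivial_inc: the counter is local, starts at 0,
// and is never passed to anything outside this function.
int trivial_inc() {
    std::atomic<int> num{0};  // local atomic, initialized to 0
    return ++num;             // atomic read-modify-write; yields the new value
}

// One way to look at the optimized assembly with clang:
//   clang++ -O3 -S -o - trivial_inc.cpp
```

Because the result of the increment is used, an x86-64 compiler that keeps the operation atomic has to emit a locked read-modify-write instruction for it.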

Even though num is an atomic int, it is a local variable that does not escape the trivial_inc function and is initialized to 0, so no other thread can ever observe it. We can therefore easily convince ourselves that the atomicity is unnecessary, and we'd expect the compiler to turn this code into something like

which can be further simplified to
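As a rough sketch of that reasoning, assuming trivial_inc increments its local counter once and returns the new value, the two forms might look like this. The function bodies and the names trivial_inc_plain and trivial_inc_folded are hypothetical and only illustrate the expected transformation.

```cpp
// Step 1 (assumed): the atomic becomes a plain int, because a local that
// never escapes the function cannot be observed by another thread.
int trivial_inc_plain() {
    int num = 0;
    return ++num;
}

// Step 2 (assumed): constant folding reduces the whole body to a constant.
int trivial_inc_folded() {
    return 1;
}
```

Both versions return the same value for every caller, so under the as-if rule a compiler would be free to make either substitution.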

But here is what clang produces with -O3:

While it's able to remove most traces of atomic<int>, notice that it still updates the value using an unnecessary lock xadd instruction.

Surely Rust, with all its superior zero-cost abstractions, will not repeat the same mistake, or will it? Let's check:

Oh no, we can see the same thing:

Oh well, it's nice to know that we should expect more wins in the future from our compilers.

To wrap up, I had to check what Go would do in this case:

And oh no:

So in addition to using an unnecessary LOCK, Go's compiler also allocates num on the heap with CALL runtime.newobject(SB) 😞

Well, nothing is perfect and I still admire compilers, but for things that matter, we should always look under the hood to make sure there are no surprises.